# openvino_notebooks

**Repository Path**: zhang-quan007/openvino_notebooks

## Basic Information

- **Project Name**: openvino_notebooks

- **Description**: 📚 A collection of Python notebooks for learning and experimenting with OpenVINO 👓

- **Primary Language**: Unknown

- **License**: Apache-2.0

- **Default Branch**: main

- **Homepage**: None

- **GVP Project**: No

## Statistics

- **Stars**: 0

- **Forks**: 9

- **Created**: 2024-03-06

- **Last Updated**: 2024-03-06

## Categories & Tags

**Categories**: Uncategorized

**Tags**: None

## README

English | [简体中文](README_cn.md)

📚 OpenVINO™ Notebooks


A collection of ready-to-run Jupyter notebooks for learning and experimenting with the OpenVINO™ Toolkit. The notebooks provide an introduction to OpenVINO basics and teach developers how to leverage our API for optimized deep learning inference.

[OpenVINO™ Notebooks at GitHub Pages](https://openvinotoolkit.github.io/openvino_notebooks/)


## 🚀 AI Trends - Notebooks

Check out the latest notebooks that show how to optimize and deploy popular models on Intel CPU and GPU.

| **Notebook** | **Description** | **Preview** | **Complementary Materials** |

| :-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------: | :------------------------------------------------------------------------------- | :------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------: | :------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------: |

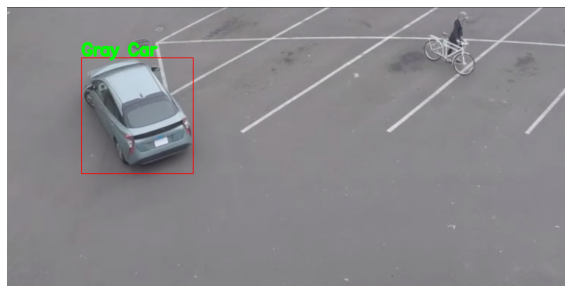

| [YOLOv8 - Optimization:](notebooks/230-yolov8-optimization/)

Object Detection

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/230-yolov8-optimization/230-yolov8-object-detection.ipynb)

Instance Segmentation

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/230-yolov8-optimization/230-yolov8-instance-segmentation.ipynb)

Keypoints Detection

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/230-yolov8-optimization/230-yolov8-keypoint-detection.ipynb) | Optimize YOLOv8 using NNCF PTQ API |  | [Blog - How to get YOLOv8 Over 1000 fps with Intel GPUs?](https://medium.com/openvino-toolkit/how-to-get-yolov8-over-1000-fps-with-intel-gpus-9b0eeee879) |

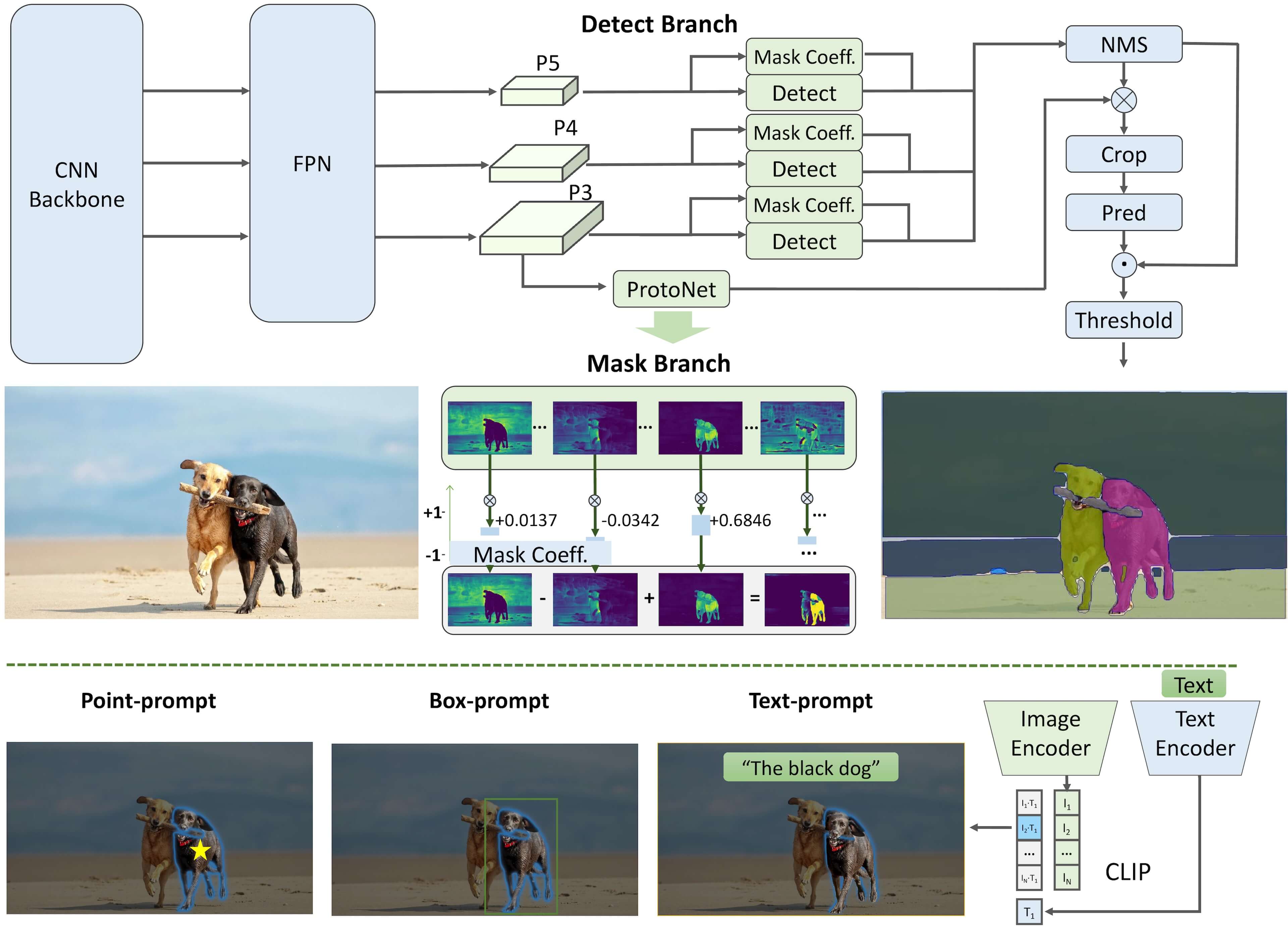

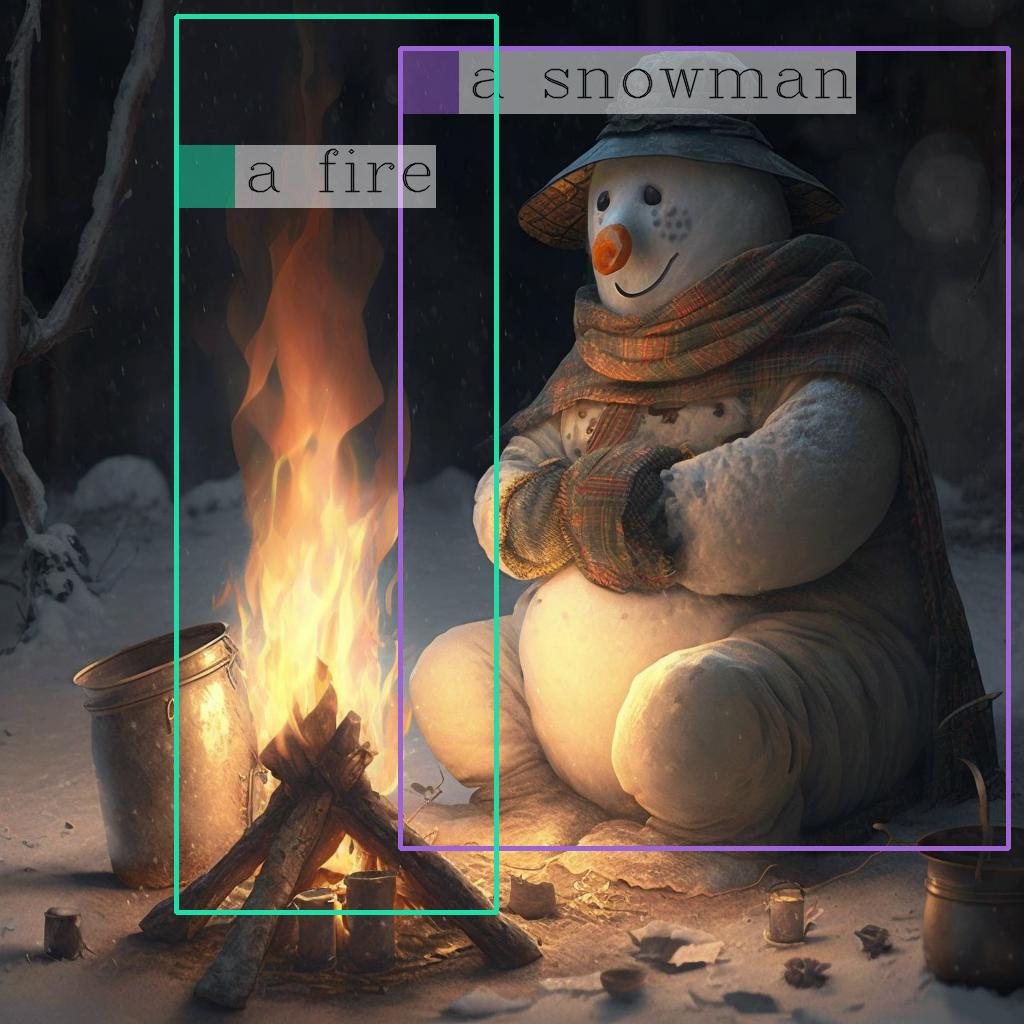

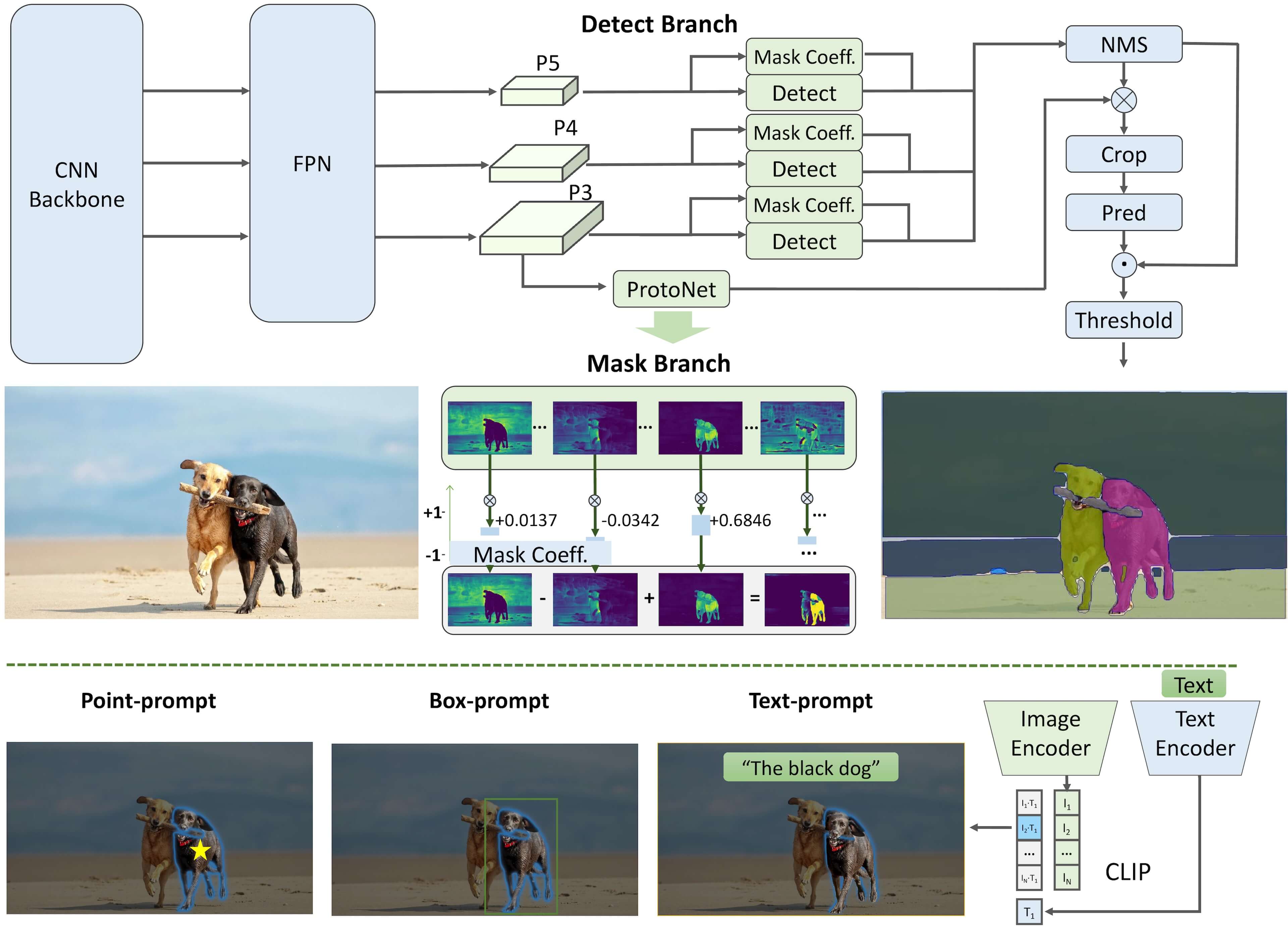

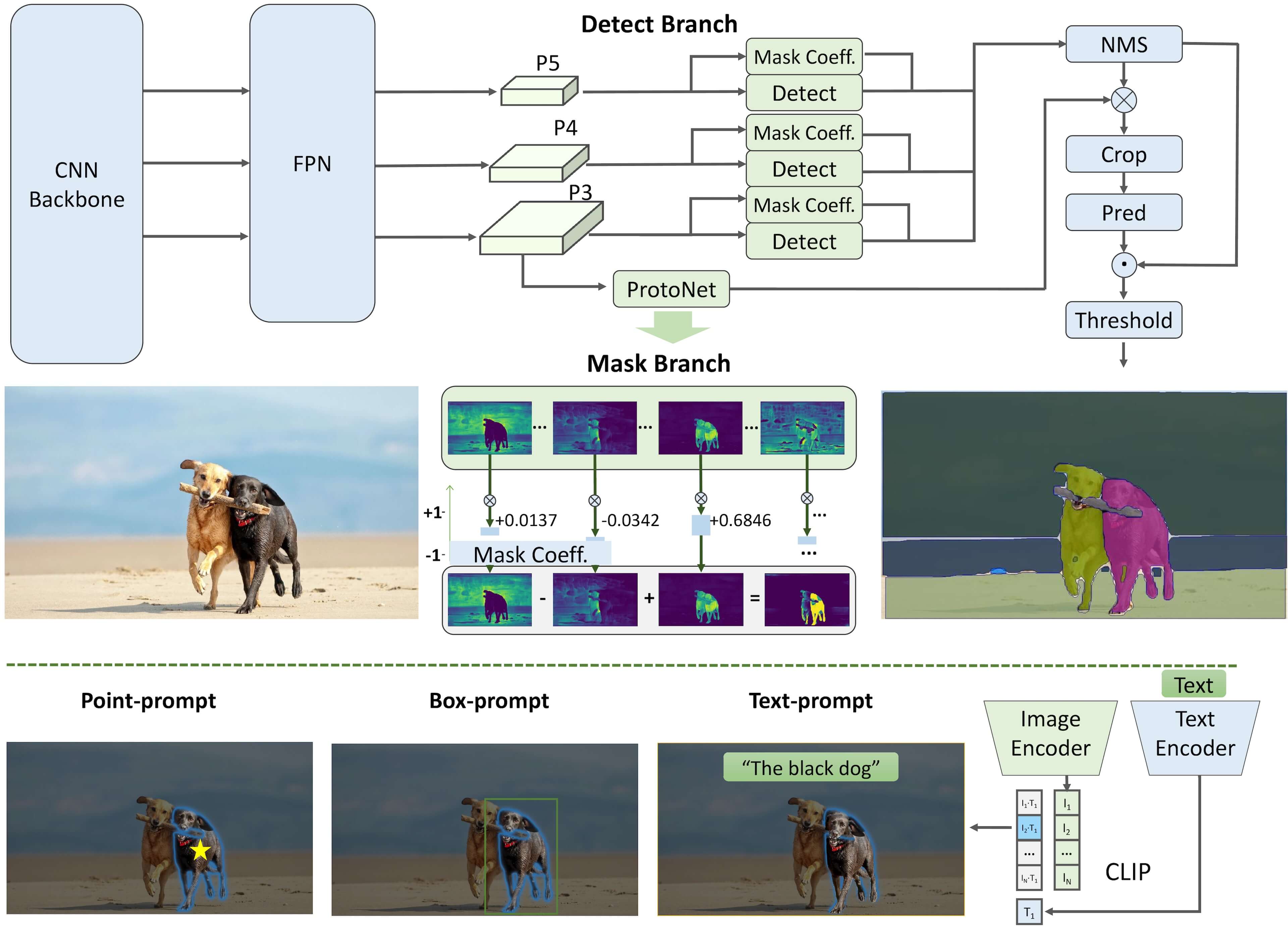

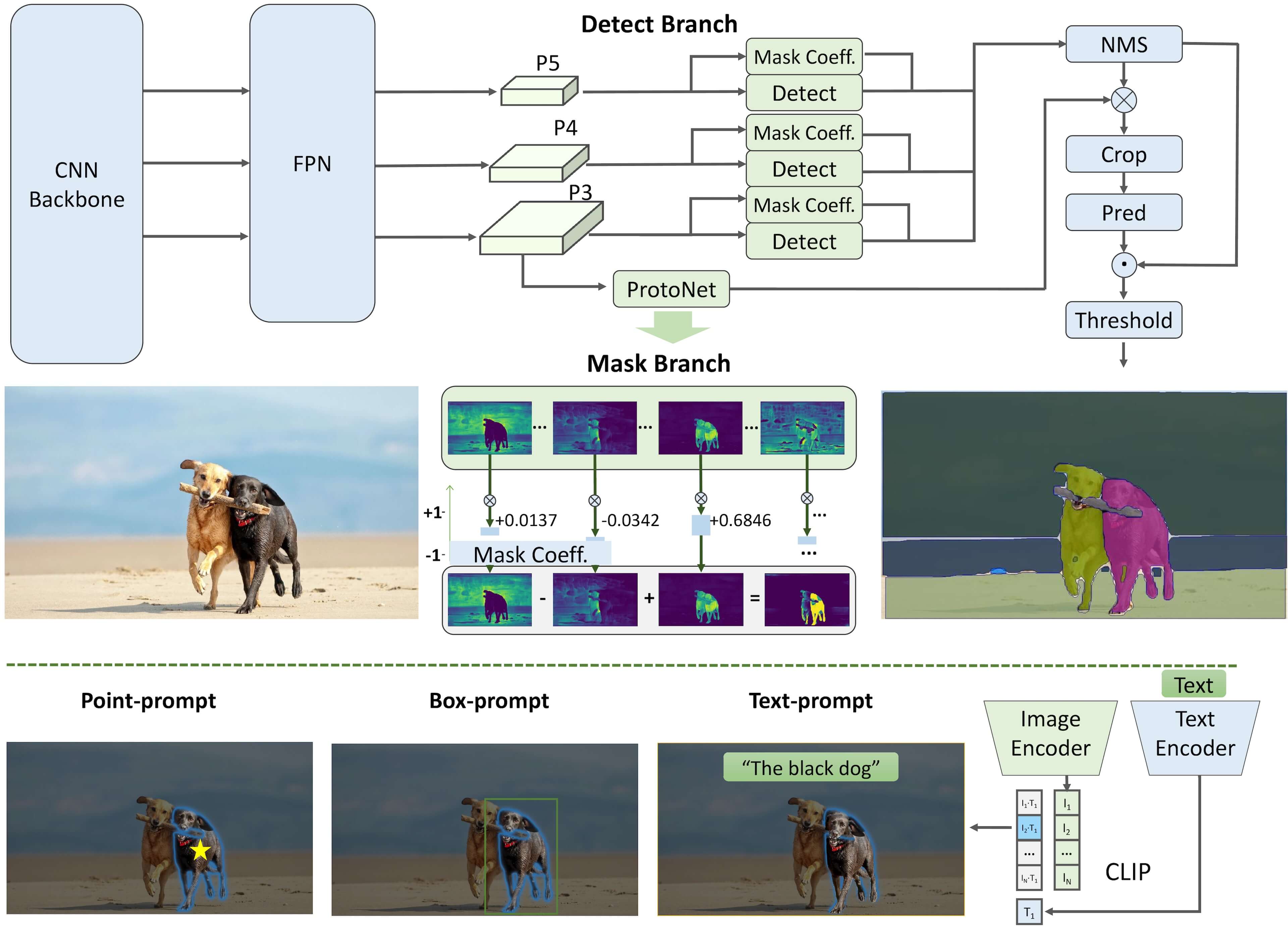

| [SAM - Segment Anything Model](notebooks/237-segment-anything/)

| [Blog - How to get YOLOv8 Over 1000 fps with Intel GPUs?](https://medium.com/openvino-toolkit/how-to-get-yolov8-over-1000-fps-with-intel-gpus-9b0eeee879) |

| [SAM - Segment Anything Model](notebooks/237-segment-anything/)

| Prompt based object segmentation mask generation using Segment Anything and OpenVINO™ |  | [Blog - SAM: Segment Anything Model — Versatile by itself and Faster by OpenVINO](https://medium.com/@paularamos_5416/sam-segment-anything-model-versatile-by-itself-and-faster-by-openvino-50175f06cd24) |

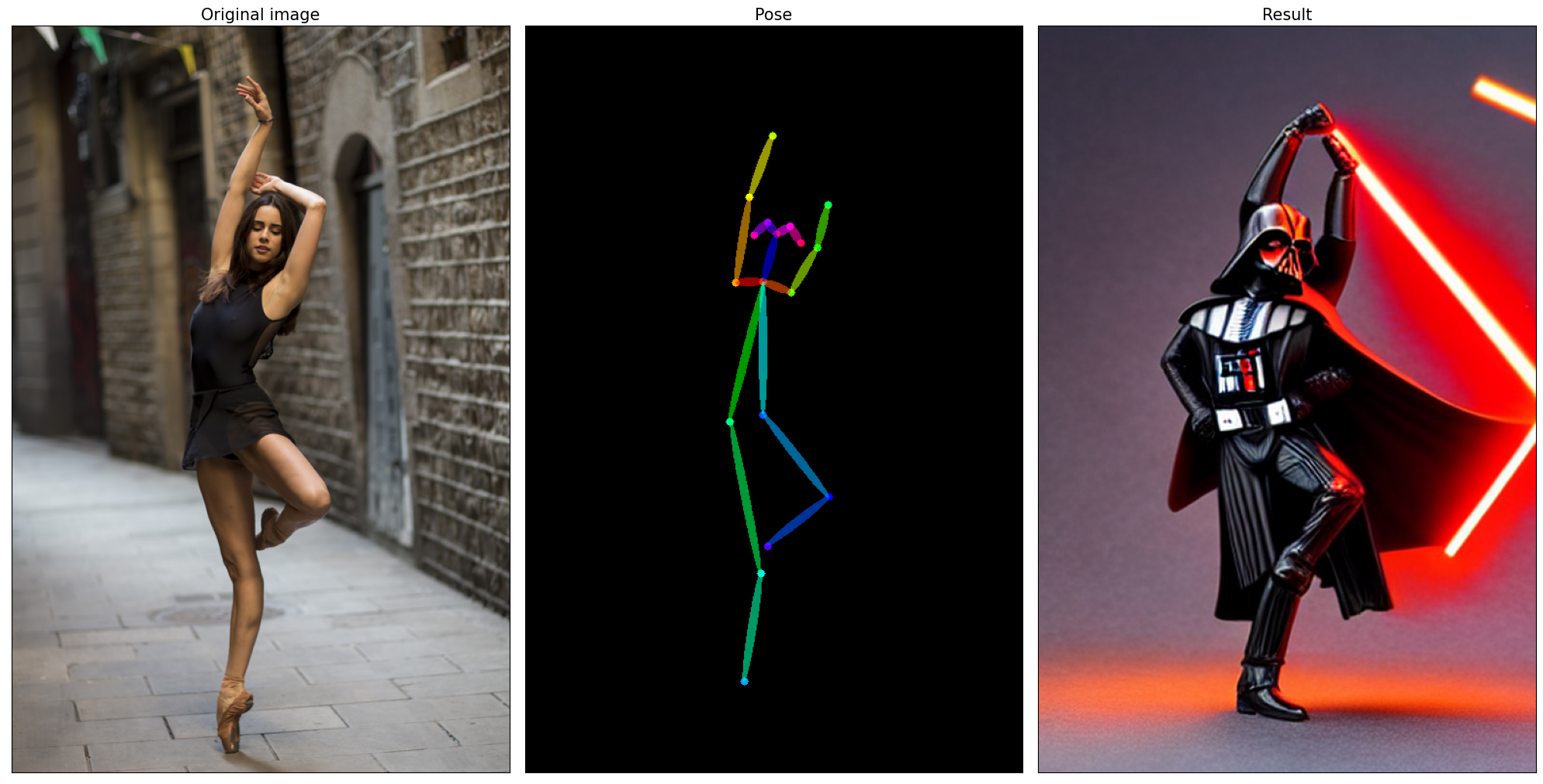

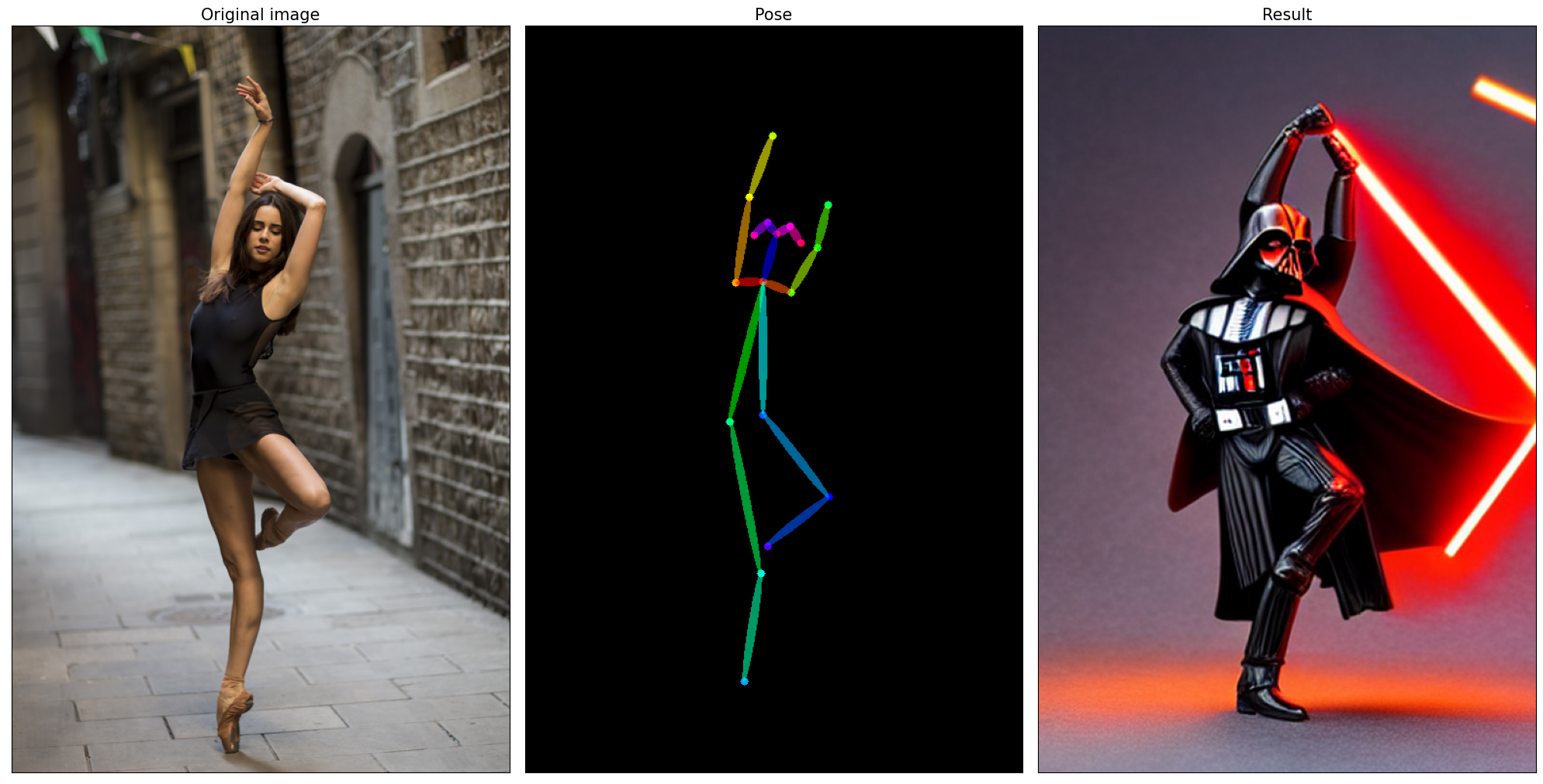

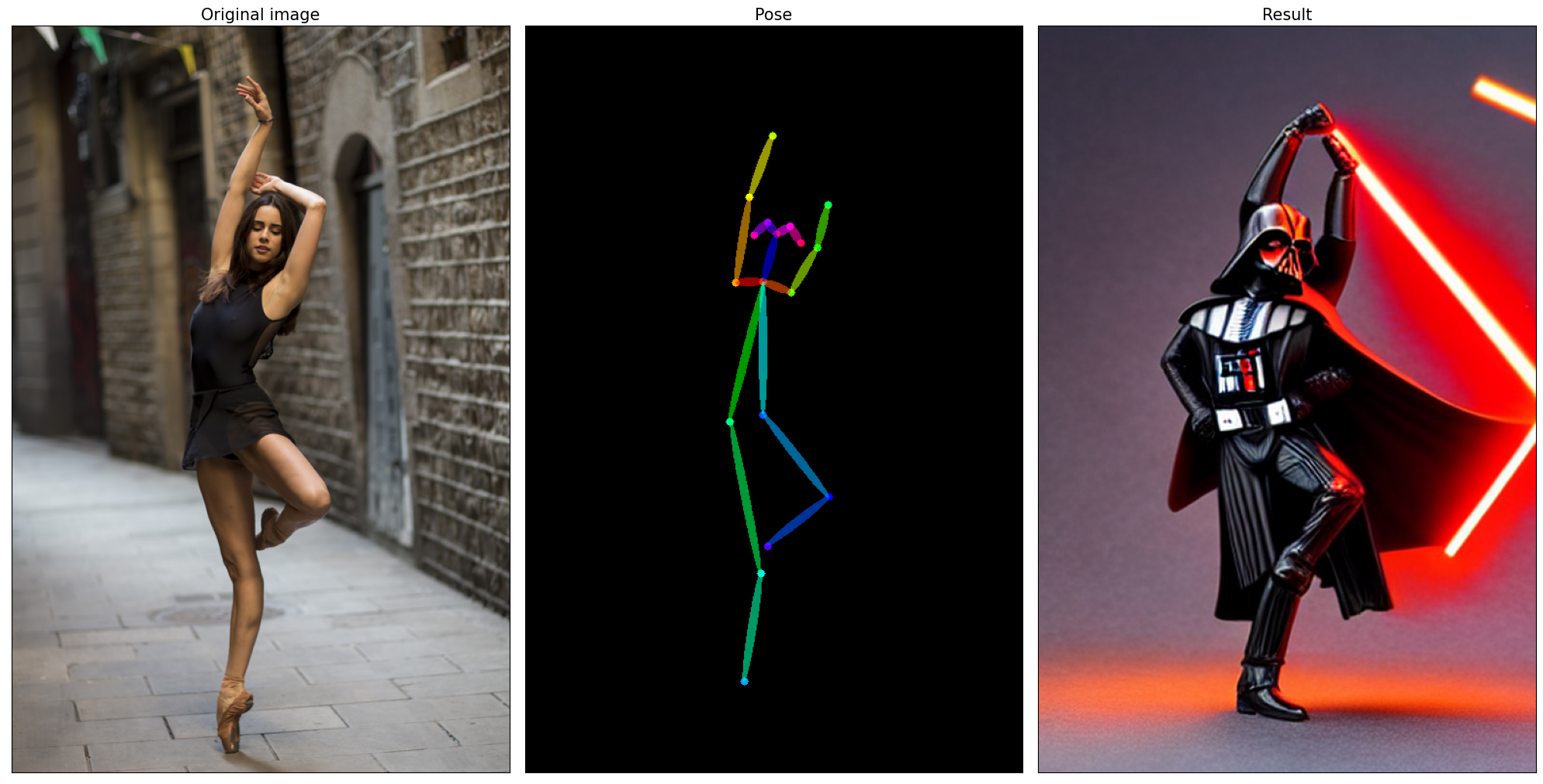

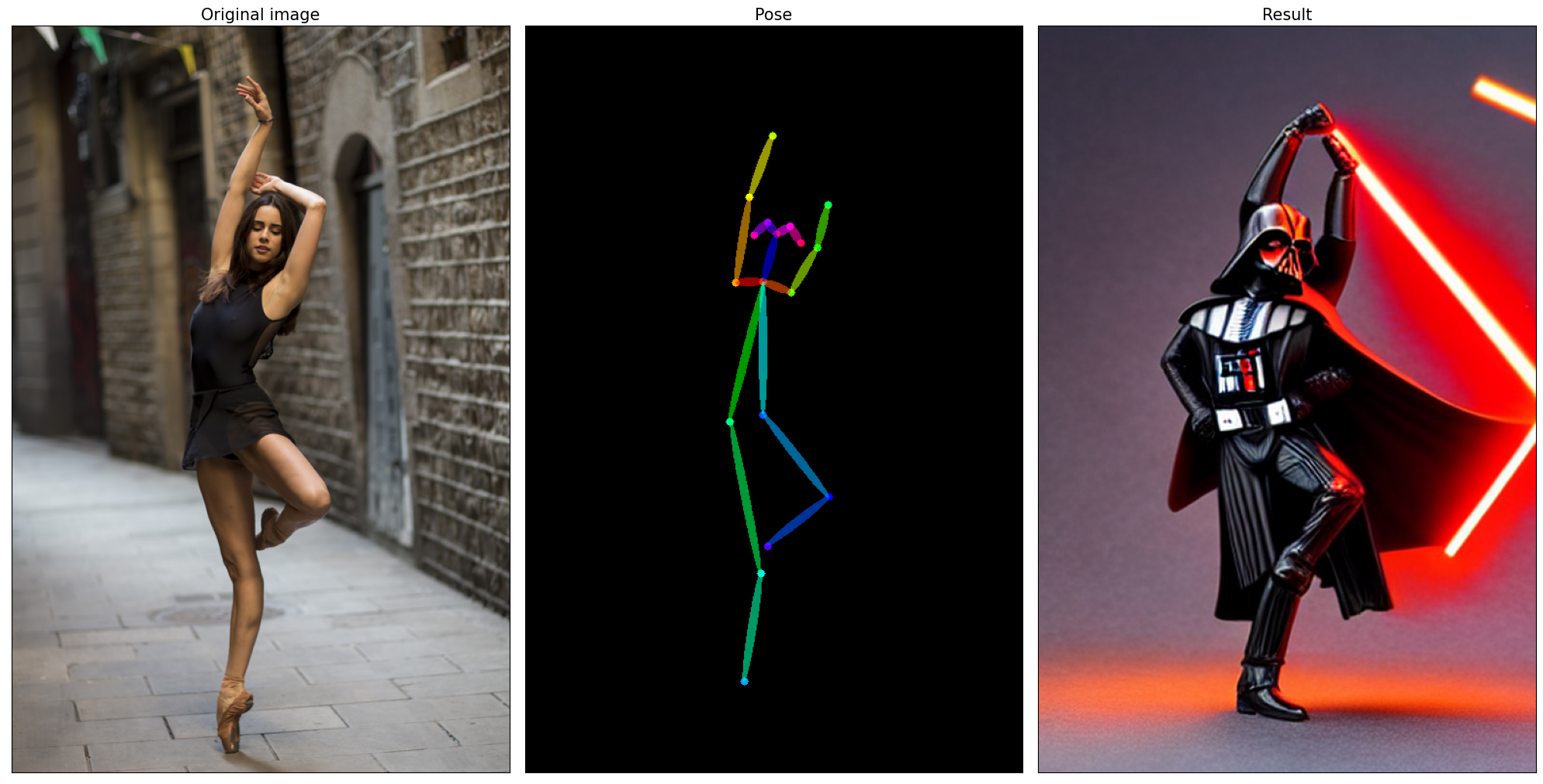

| [ControlNet - Stable-Diffusion](notebooks/235-controlnet-stable-diffusion/)

| [Blog - SAM: Segment Anything Model — Versatile by itself and Faster by OpenVINO](https://medium.com/@paularamos_5416/sam-segment-anything-model-versatile-by-itself-and-faster-by-openvino-50175f06cd24) |

| [ControlNet - Stable-Diffusion](notebooks/235-controlnet-stable-diffusion/)

| A Text-to-Image Generation with ControlNet Conditioning and OpenVINO™ |  | [Blog - Control your Stable Diffusion Model with ControlNet and OpenVINO](https://medium.com/@paularamos_5416/control-your-stable-diffusion-model-with-controlnet-and-openvino-f2aa7e6b1ebd) |

| [Stable Diffusion v2](notebooks/236-stable-diffusion-v2/)

| [Blog - Control your Stable Diffusion Model with ControlNet and OpenVINO](https://medium.com/@paularamos_5416/control-your-stable-diffusion-model-with-controlnet-and-openvino-f2aa7e6b1ebd) |

| [Stable Diffusion v2](notebooks/236-stable-diffusion-v2/)

| Text-to-Image Generation and Infinite Zoom with Stable Diffusion v2 and OpenVINO™ |  | [Blog - How to run Stable Diffusion on Intel GPUs with OpenVINO](https://medium.com/openvino-toolkit/how-to-run-stable-diffusion-on-intel-gpus-with-openvino-840714f122b4) |

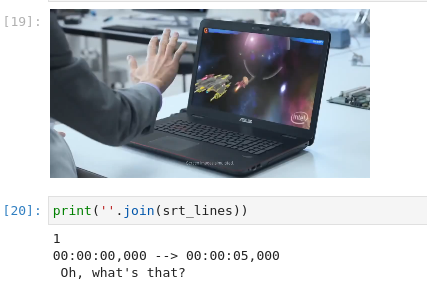

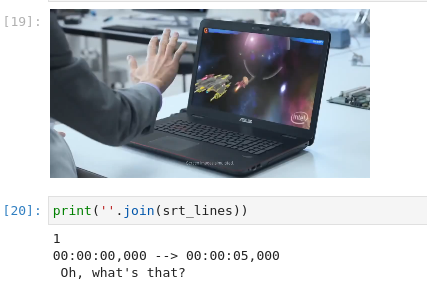

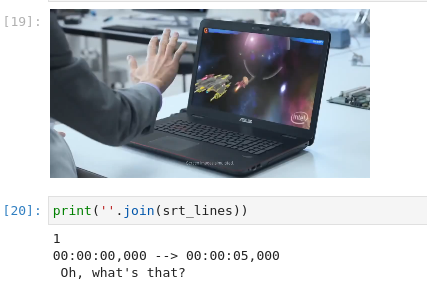

| [Whisper - Subtitles generation](notebooks/227-whisper-subtitles-generation/)

| [Blog - How to run Stable Diffusion on Intel GPUs with OpenVINO](https://medium.com/openvino-toolkit/how-to-run-stable-diffusion-on-intel-gpus-with-openvino-840714f122b4) |

| [Whisper - Subtitles generation](notebooks/227-whisper-subtitles-generation/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/227-whisper-subtitles-generation/227-whisper-convert.ipynb) | Generate subtitles for video with OpenAI Whisper and OpenVINO |  | |

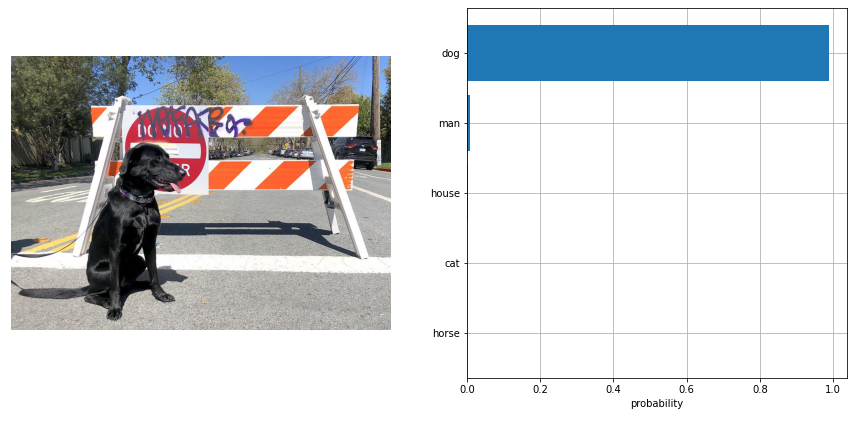

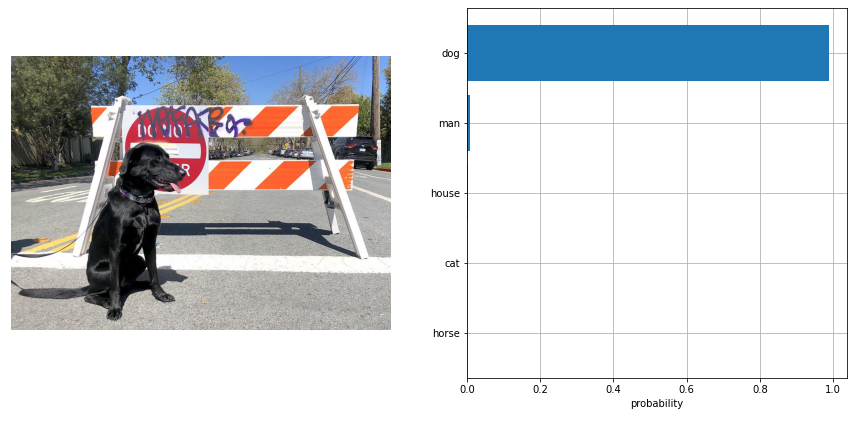

| [CLIP - zero-shot-image-classification](notebooks/228-clip-zero-shot-image-classification)

| |

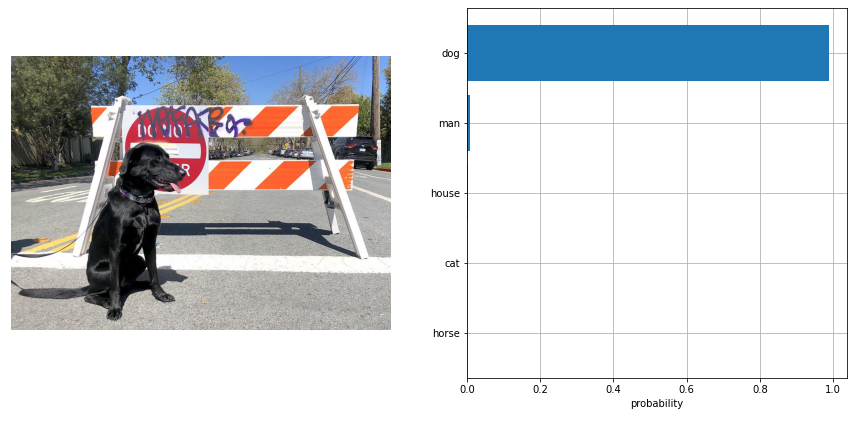

| [CLIP - zero-shot-image-classification](notebooks/228-clip-zero-shot-image-classification)

| Perform Zero-shot Image Classification with CLIP and OpenVINO |  | [Blog - Generative AI and Explainable AI with OpenVINO ](https://medium.com/@paularamos_5416/generative-ai-and-explainable-ai-with-openvino-2b5f8e4e720b#:~:text=pix2pix%2Dimage%2Dediting-,Explainable%20AI%20with%20OpenVINO,-Explainable%20AI%20is) |

| [BLIP - Visual-language-processing](notebooks/233-blip-visual-language-processing/)

| [Blog - Generative AI and Explainable AI with OpenVINO ](https://medium.com/@paularamos_5416/generative-ai-and-explainable-ai-with-openvino-2b5f8e4e720b#:~:text=pix2pix%2Dimage%2Dediting-,Explainable%20AI%20with%20OpenVINO,-Explainable%20AI%20is) |

| [BLIP - Visual-language-processing](notebooks/233-blip-visual-language-processing/)

| Visual Question Answering and Image Captioning using BLIP and OpenVINO™ |  | [Blog - Multimodality with OpenVINO — BLIP](https://medium.com/@paularamos_5416/multimodality-with-openvino-blip-b20bd3a2c87) |

| [Instruct pix2pix - Image-editing](notebooks/231-instruct-pix2pix-image-editing/)

| [Blog - Multimodality with OpenVINO — BLIP](https://medium.com/@paularamos_5416/multimodality-with-openvino-blip-b20bd3a2c87) |

| [Instruct pix2pix - Image-editing](notebooks/231-instruct-pix2pix-image-editing/)

| Image editing with InstructPix2Pix |  | [Blog - Generative AI and Explainable AI with OpenVINO](https://medium.com/@paularamos_5416/generative-ai-and-explainable-ai-with-openvino-2b5f8e4e720b#:~:text=2.-,InstructPix2Pix,-Pix2Pix%20is%20a) |

| [DeepFloyd IF - Text-to-Image generation](notebooks/238-deepfloyd-if/)

| [Blog - Generative AI and Explainable AI with OpenVINO](https://medium.com/@paularamos_5416/generative-ai-and-explainable-ai-with-openvino-2b5f8e4e720b#:~:text=2.-,InstructPix2Pix,-Pix2Pix%20is%20a) |

| [DeepFloyd IF - Text-to-Image generation](notebooks/238-deepfloyd-if/)

| Text-to-Image Generation with DeepFloyd IF and OpenVINO™ |  | |

| [ImageBind](notebooks/239-image-bind/)

| |

| [ImageBind](notebooks/239-image-bind/)

| Binding multimodal data using ImageBind and OpenVINO™ |  | |

| [Dolly v2](notebooks/240-dolly-2-instruction-following/)

| |

| [Dolly v2](notebooks/240-dolly-2-instruction-following/)

| Instruction following using Databricks Dolly 2.0 and OpenVINO™ |  | |

| [Stable Diffusion XL and Segmind Stable Diffusion 1B (SSD-1B)](notebooks/248-stable-diffusion-xl/)

| |

| [Stable Diffusion XL and Segmind Stable Diffusion 1B (SSD-1B)](notebooks/248-stable-diffusion-xl/)

| Image generation with Stable Diffusion XL and Segmind Stable Diffusion 1B (SSD-1B) and OpenVINO™ |  | |

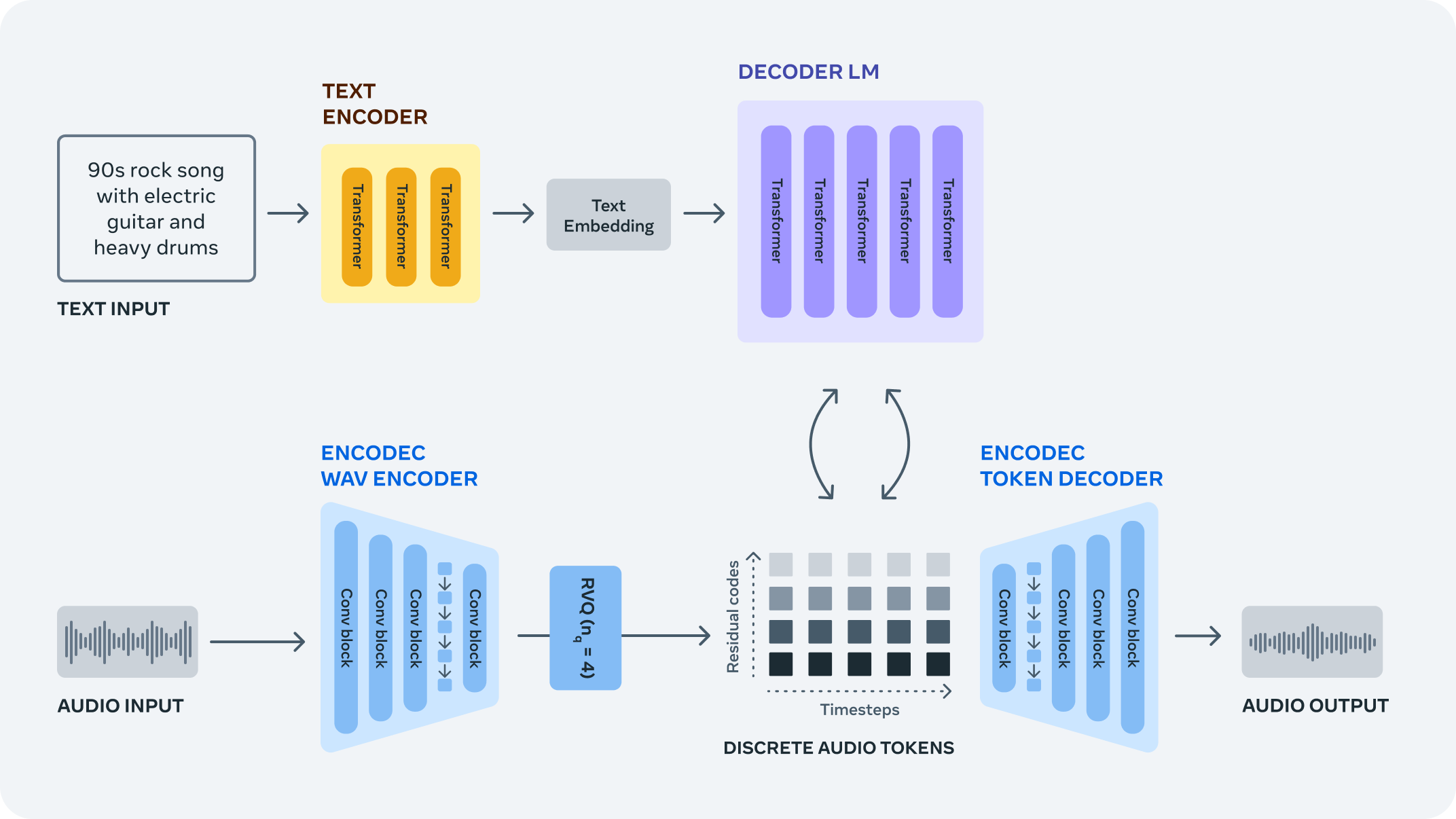

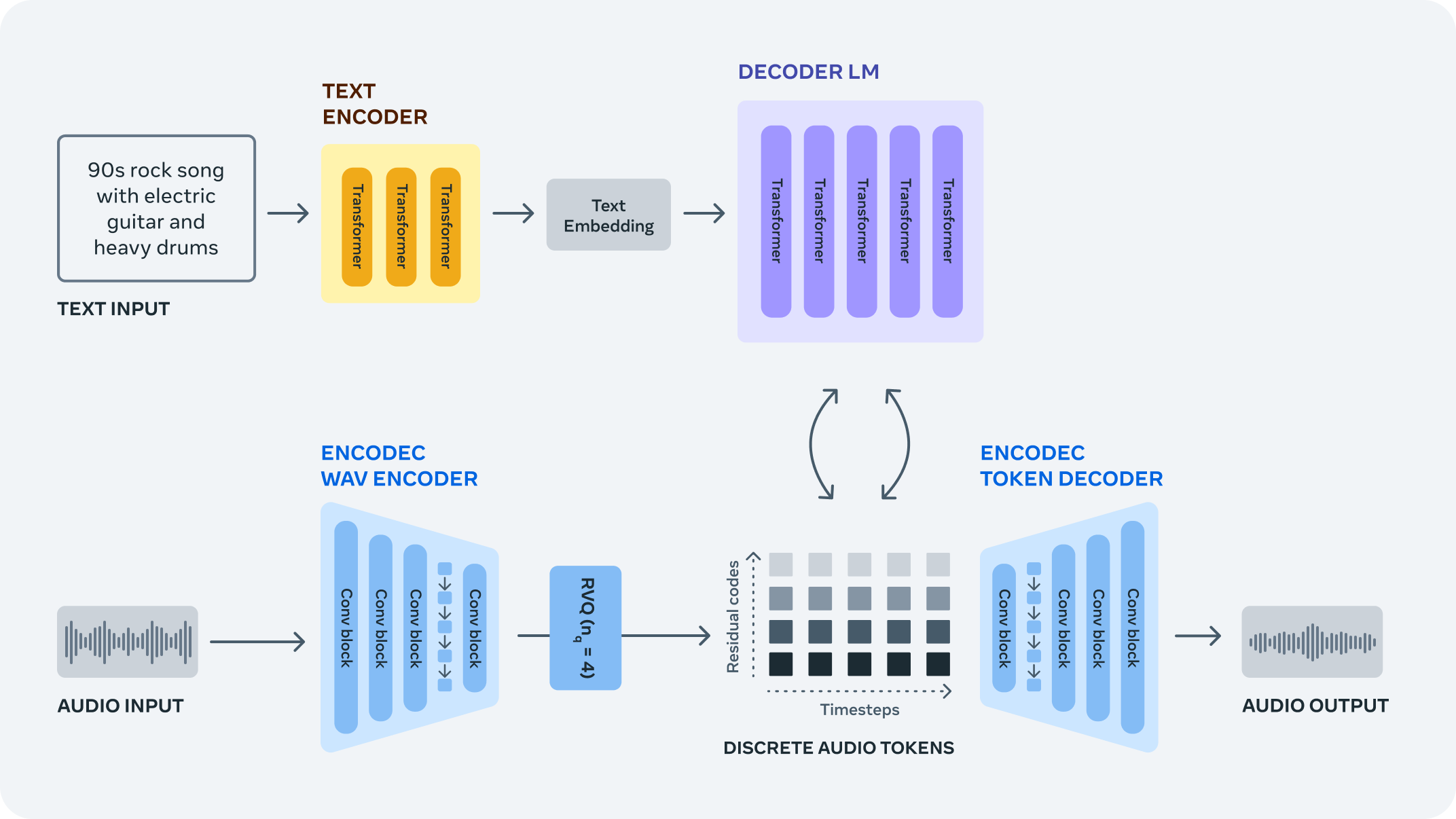

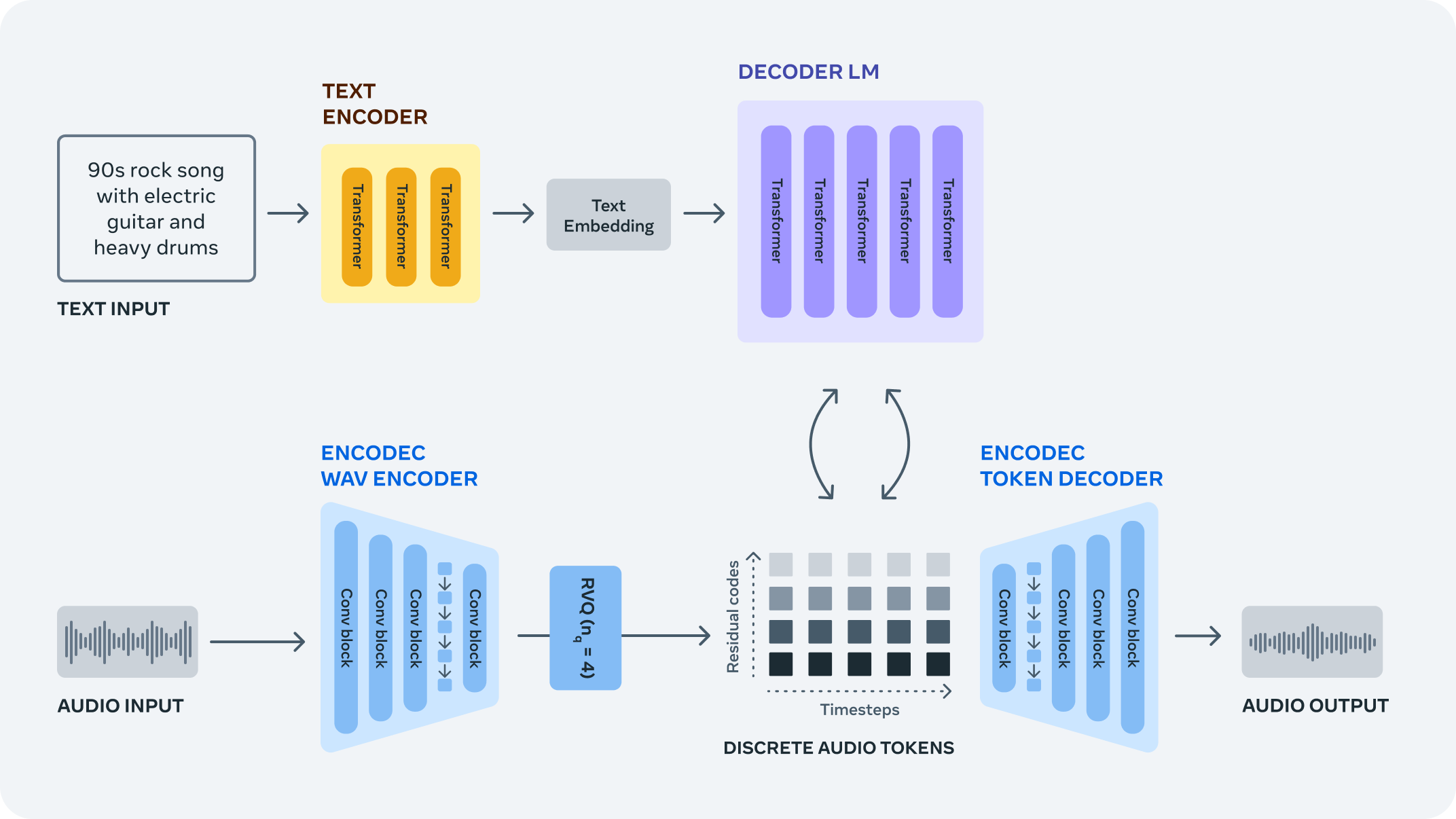

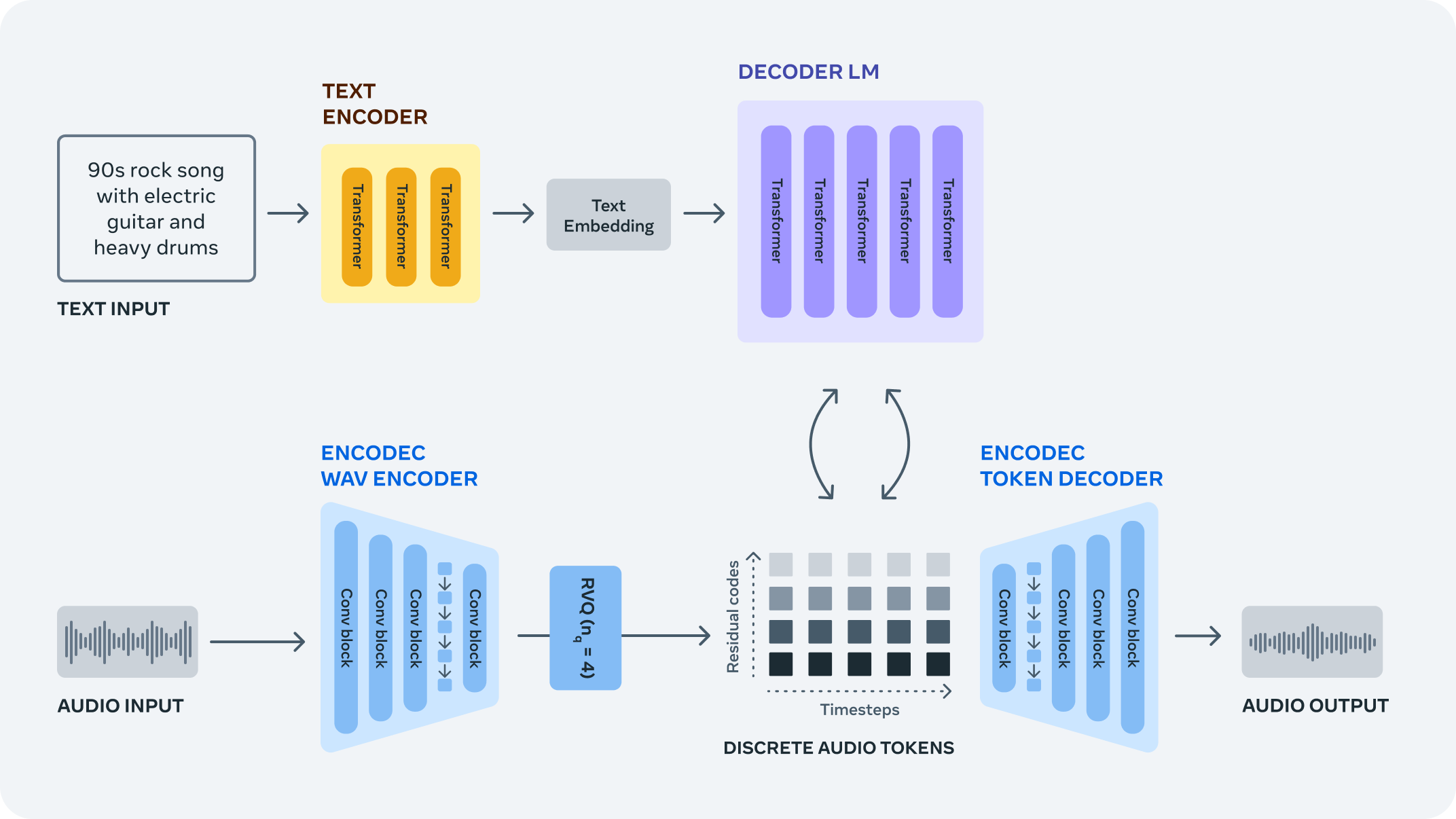

| [MusicGen](notebooks/250-music-generation/)

| |

| [MusicGen](notebooks/250-music-generation/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F250-music-generation%2F250-music-generation.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/250-music-generation/250-music-generation.ipynb) | Controllable Music Generation with MusicGen and OpenVINO™ |  |

| [Tiny SD](notebooks/251-tiny-sd-image-generation/)

|

| [Tiny SD](notebooks/251-tiny-sd-image-generation/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/251-tiny-sd-image-generation/251-tiny-sd-image-generation.ipynb) | Image Generation with Tiny-SD and OpenVINO™ |  | |

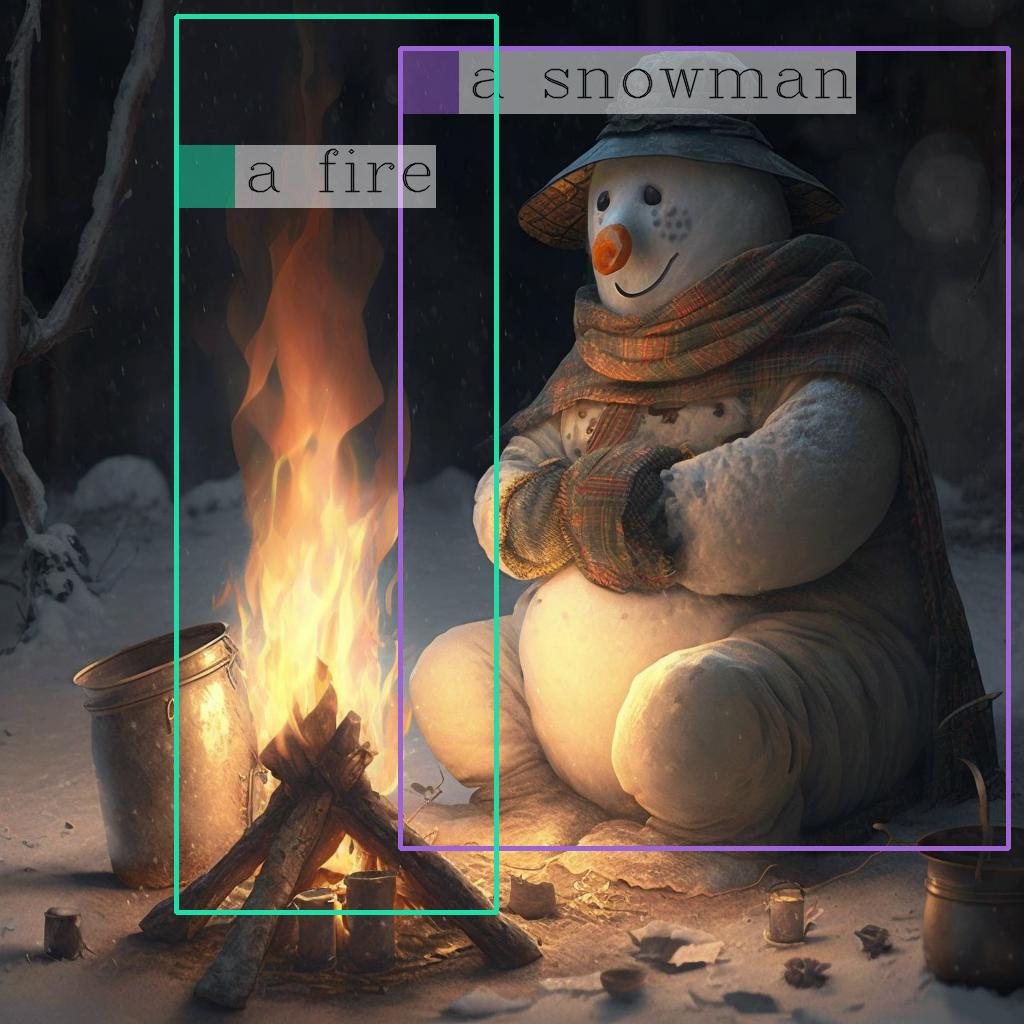

| [ZeroScope Text-to-video synthesis](notebooks/253-zeroscope-text2video)

| |

| [ZeroScope Text-to-video synthesis](notebooks/253-zeroscope-text2video)

| Text-to-video synthesis with ZeroScope and OpenVINO™ | A panda eating bamboo on a rock  |

| [LLM chatbot](notebooks/254-llm-chatbot)

|

| [LLM chatbot](notebooks/254-llm-chatbot)

| Create LLM-powered Chatbot using OpenVINO™ |  |

| [QA over Document](notebooks/254-llm-chatbot)

|

| [QA over Document](notebooks/254-llm-chatbot)

| Create LLM-powered RAG system using OpenVINO™ and LangChain |  |

| [Bark Text-to-Speech](notebooks/256-bark-text-to-audio/)

|

| [Bark Text-to-Speech](notebooks/256-bark-text-to-audio/)

| Text-to-Speech generation using Bark and OpenVINO™ |  | [LLaVA Multimodal Chatbot](notebooks/257-llava-multimodal-chatbot/)

| [LLaVA Multimodal Chatbot](notebooks/257-llava-multimodal-chatbot/)

| Visual-language assistant with LLaVA and OpenVINO™ |  | [BLIP-Diffusion - Subject-Driven Generation](notebooks/258-blip-diffusion-subject-generation)

| [BLIP-Diffusion - Subject-Driven Generation](notebooks/258-blip-diffusion-subject-generation)

| Subject-driven image generation and editing using BLIP Diffusion and OpenVINO™ |  | [DeciDiffusion](notebooks/259-decidiffusion-image-generation/)

| [DeciDiffusion](notebooks/259-decidiffusion-image-generation/)

| Image generation with DeciDiffusion and OpenVINO™ |  |

| [Fast Segment Anything](notebooks/261-fast-segment-anything/)

|

| [Fast Segment Anything](notebooks/261-fast-segment-anything/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F261-fast-segment-anything%2F261-fast-segment-anything.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/261-fast-segment-anything/261-fast-segment-anything.ipynb) | Object segmentations with FastSAM and OpenVINO™ |  |

| [SoftVC VITS Singing Voice Conversion](notebooks/262-softvc-voice-conversion)

|

| [SoftVC VITS Singing Voice Conversion](notebooks/262-softvc-voice-conversion)

| SoftVC VITS Singing Voice Conversion and OpenVINO™ | |

| [Latent Consistency Models: the next generation of Image Generation models ](notebooks/263-latent-consistency-models-image-generation)

| Image generation with Latent Consistency Models (LCM) and OpenVINO™ |  |

| [Speedup ControlNet pipeline with LCM LoRA](notebooks/263-latent-consistency-models-image-generation)

|

| [Speedup ControlNet pipeline with LCM LoRA](notebooks/263-latent-consistency-models-image-generation)

| Text-to-Image Generation with LCM LoRA and ControlNet Conditioning |  |

| [QR Code Monster](notebooks/264-qrcode-monster/)

|

| [QR Code Monster](notebooks/264-qrcode-monster/)

| Generate creative QR codes with ControlNet QR Code Monster and OpenVINO™ |  |

| [Würstchen](notebooks/265-wuerstchen-image-generation)

|

| [Würstchen](notebooks/265-wuerstchen-image-generation)

| Text-to-image generation with Würstchen and OpenVINO™ |  | |

| [Distil-Whisper](notebooks/267-distil-whisper-asr)

| |

| [Distil-Whisper](notebooks/267-distil-whisper-asr)

| Automatic speech recognition using Distil-Whisper and OpenVINO™ | | |

| [FILM](notebooks/269-film-slowmo)

| Frame interpolation with FILM and OpenVINO™ |  |

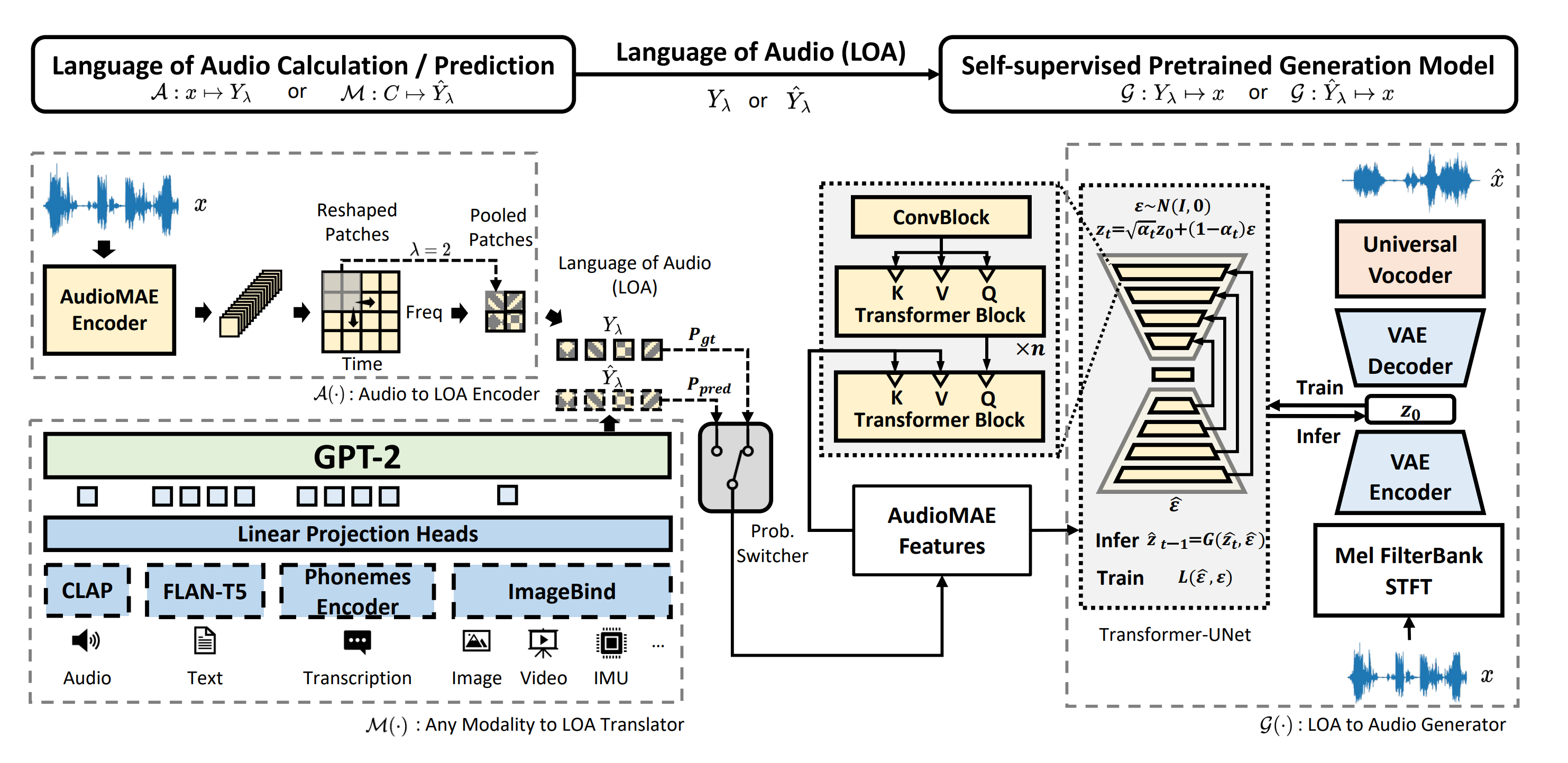

| [Audio LDM 2](notebooks/270-sound-generation-audioldm2/)

|

| [Audio LDM 2](notebooks/270-sound-generation-audioldm2/)

| Sound Generation with AudioLDM2 and OpenVINO™ |  |

| [SDXL-Turbo](notebooks/271-sdxl-turbo/)

|

| [SDXL-Turbo](notebooks/271-sdxl-turbo/)

| Single-step image generation using SDXL-turbo and OpenVINO |  |

| [Stable-Zephyr chatbot](notebooks/273-stable-zephyr-3b-chatbot/)

|

| [Stable-Zephyr chatbot](notebooks/273-stable-zephyr-3b-chatbot/)

| Use Stable-Zephyr as chatbot assistant with OpenVINO |  |

| [Efficient-SAM](notebooks/274-efficient-sam)

|

| [Efficient-SAM](notebooks/274-efficient-sam)

| Object segmentation with EfficientSAM and OpenVINO |  |

| [LLM Instruction following pipeline](notebooks/275-llm-question-answering)

|

| [LLM Instruction following pipeline](notebooks/275-llm-question-answering)

| Usage variety of LLM models for answering questions using OpenVINO |  |

|[Stable Diffusion with IP-Adapter](notebooks/278-stable-diffusion-ip-adapter)

|

|[Stable Diffusion with IP-Adapter](notebooks/278-stable-diffusion-ip-adapter)

| Image conditioning in Stable Diffusion pipeline using IP-Adapter |  |

| [MobileVLM](notebooks/279-mobilevlm-language-assistant)

|

| [MobileVLM](notebooks/279-mobilevlm-language-assistant)

| Mobile language assistant with MobileVLM and OpenVINO | |

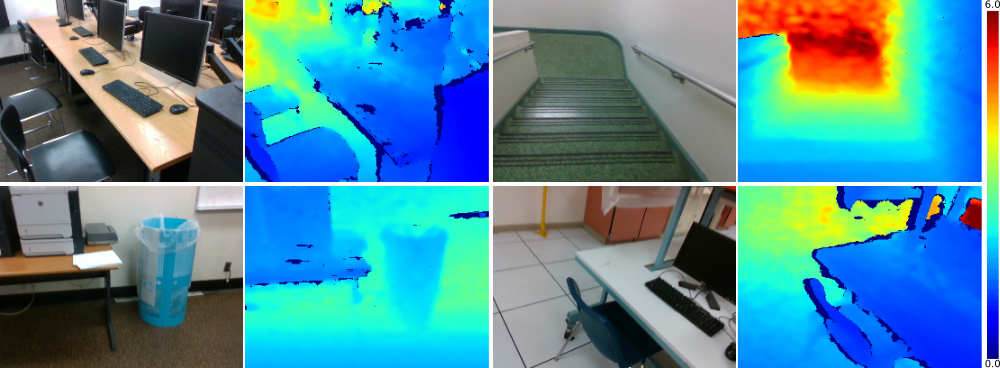

| [DepthAnything](notebooks/280-depth-anything)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F280-depth-anythingh%2F280-depth-anything.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/280-depth-anything/280-depth-anything.ipynb) | Monocular Depth estimation with DepthAnything and OpenVINO |  |

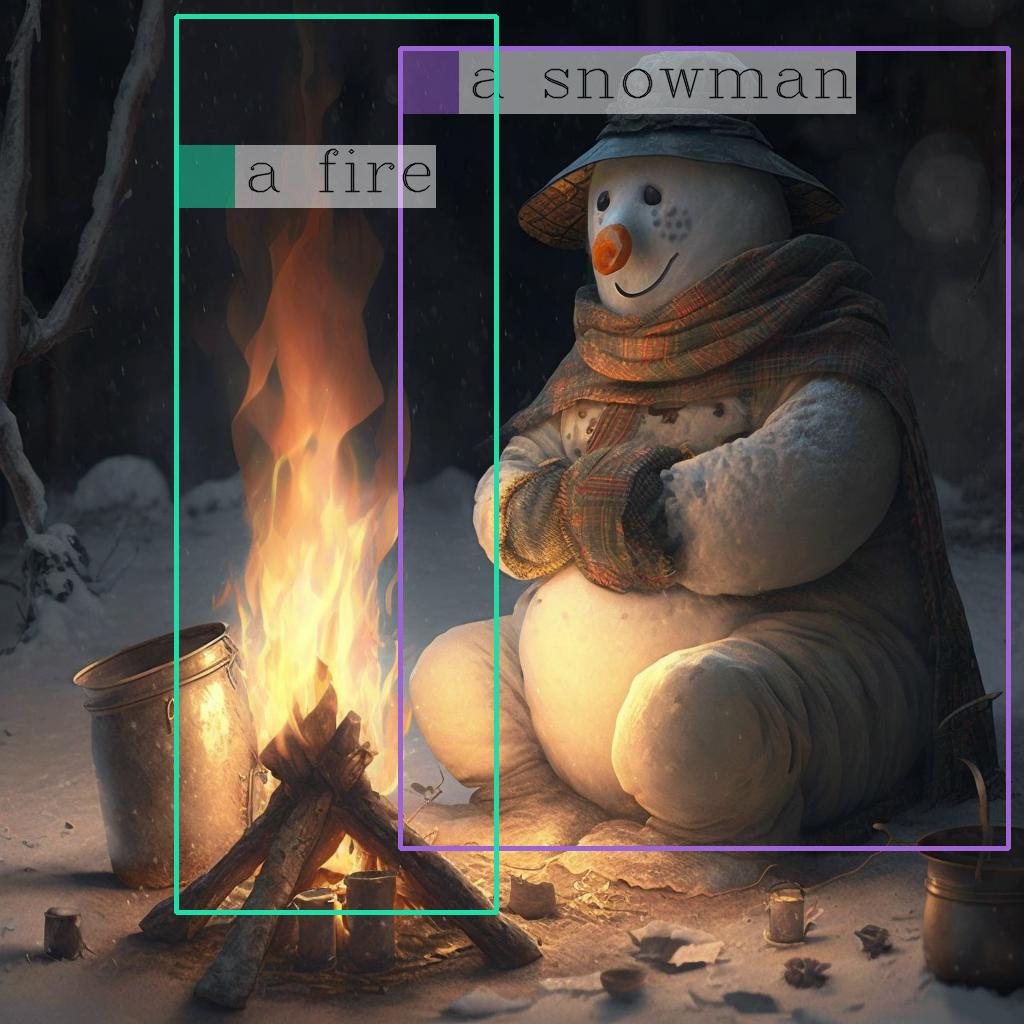

| [Kosmos-2: Grounding Multimodal Large Language Models](notebooks/281-kosmos2-multimodal-large-language-model)

|

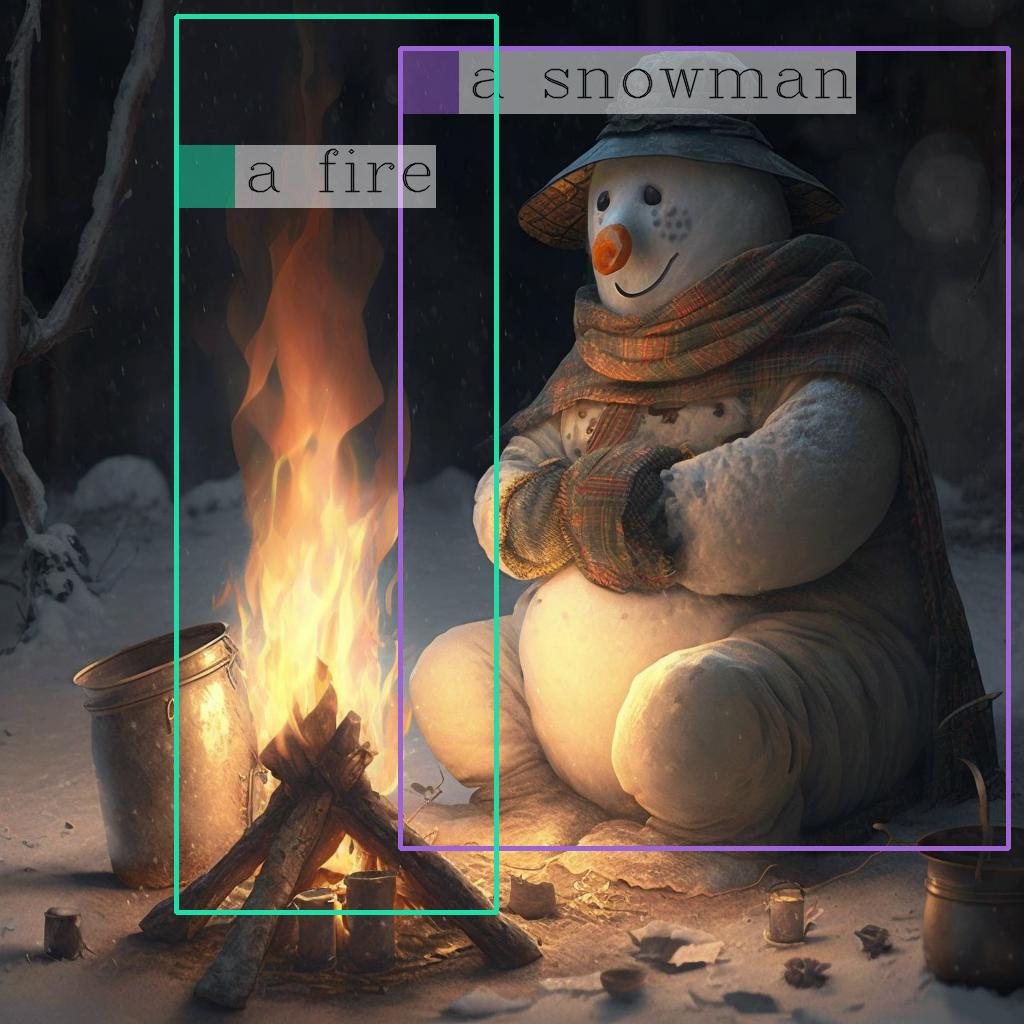

| [Kosmos-2: Grounding Multimodal Large Language Models](notebooks/281-kosmos2-multimodal-large-language-model)

| Kosmos-2: Grounding Multimodal Large Language Model and OpenVINO™ |  |

| [PhotoMaker](notebooks/283-photo-maker)

|

| [PhotoMaker](notebooks/283-photo-maker)

| Text-to-image generation using PhotoMaker and OpenVINO |  |

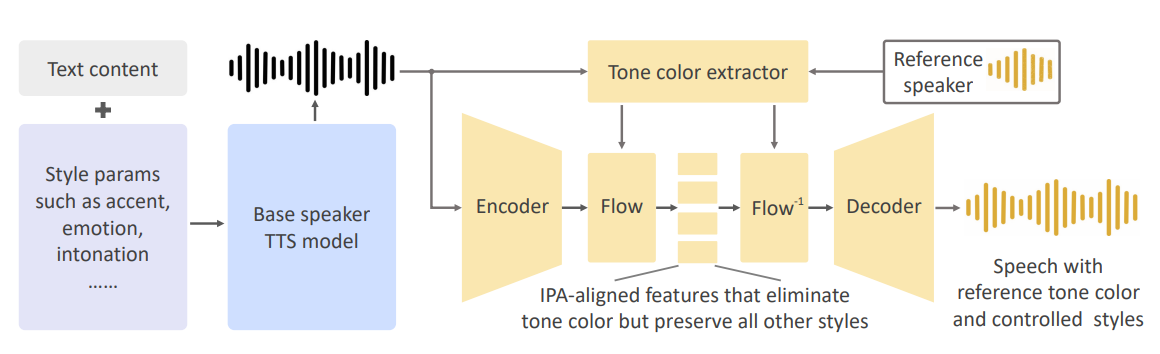

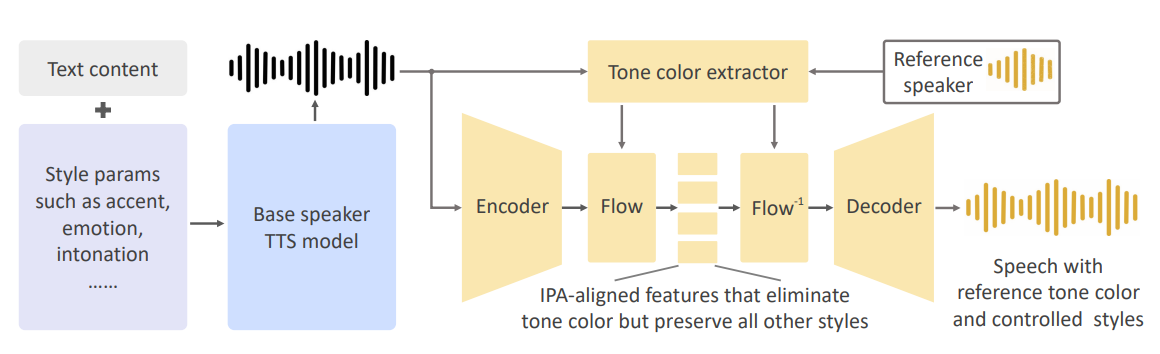

| [OpenVoice](notebooks/284-openvoice)

|

| [OpenVoice](notebooks/284-openvoice)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F284-openvoice%2F284-openvoice.ipynb)[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/284-openvoice/284-openvoice.ipynb) | OpenVoice a versatile instant voice tone transferring and generating speech in various languages. | |

| [InstantID](notebooks/286-instant-id)

|

| [InstantID](notebooks/286-instant-id)

| InstantID: Zero-shot Identity-Preserving Image Generation using OpenVINO|  | |

| [YOLOv9 Optimization](notebooks/287-yolov9-optimization)

| |

| [YOLOv9 Optimization](notebooks/287-yolov9-optimization)

| Optimize YOLOv9 using NNCF |  | |

## Table of Contents

- [🚀 AI Trends - Notebooks](#-ai-trends---notebooks)
- [Table of Contents](#table-of-contents)
- [📝 Installation Guide](#-installation-guide)
- [🚀 Getting Started](#-getting-started)
  - [💻 First steps](#-first-steps)
  - [⌚ Convert & Optimize](#-convert--optimize)
  - [🎯 Model Demos](#-model-demos)
  - [🏃 Model Training](#-model-training)
  - [📺 Live Demos](#-live-demos)
- [⚙️ System Requirements](#️-system-requirements)
- [💻 Run the Notebooks](#-run-the-notebooks)
  - [To Launch a Single Notebook](#to-launch-a-single-notebook)
  - [To Launch all Notebooks](#to-launch-all-notebooks)
- [🧹 Cleaning Up](#-cleaning-up)
- [⚠️ Troubleshooting](#️-troubleshooting)
- [🧑‍💻 Contributors](#-contributors)
- [❓ FAQ](#-faq)

## 📝 Installation Guide

OpenVINO Notebooks require Python and Git. To get started, select the guide for your operating system or environment:

| [Windows](https://github.com/openvinotoolkit/openvino_notebooks/wiki/Windows) | [Ubuntu](https://github.com/openvinotoolkit/openvino_notebooks/wiki/Ubuntu) | [macOS](https://github.com/openvinotoolkit/openvino_notebooks/wiki/macOS) | [Red Hat](https://github.com/openvinotoolkit/openvino_notebooks/wiki/Red-Hat-and-CentOS) | [CentOS](https://github.com/openvinotoolkit/openvino_notebooks/wiki/Red-Hat-and-CentOS) | [Azure ML](https://github.com/openvinotoolkit/openvino_notebooks/wiki/AzureML) | [Docker](https://github.com/openvinotoolkit/openvino_notebooks/wiki/Docker) | [Amazon SageMaker](https://github.com/openvinotoolkit/openvino_notebooks/wiki/SageMaker) |
| --- | --- | --- | --- | --- | --- | --- | --- |

## 🚀 Getting Started

The Jupyter notebooks are grouped into four categories. Select the one that matches your needs, or give them all a try. Good luck!

**NOTE: The main branch of this repository was updated to support the new OpenVINO 2023.3 release.** To upgrade to the new release version, run `pip install --upgrade -r requirements.txt` in your `openvino_env` virtual environment. If you are installing for the first time, see the [Installation Guide](#-installation-guide) section above. To use the previous release version of OpenVINO, check out the [2023.2 branch](https://github.com/openvinotoolkit/openvino_notebooks/tree/2023.2). To use the previous Long Term Support (LTS) version of OpenVINO, check out the [2022.3 branch](https://github.com/openvinotoolkit/openvino_notebooks/tree/2022.3).

If you need help, please start a GitHub [Discussion](https://github.com/openvinotoolkit/openvino_notebooks/discussions).
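To confirm that an environment has picked up the release mentioned in the note, a small check like the one below may help. It is only a sketch: it assumes the package is installed under the distribution name `openvino` and that its version string starts with `year.minor` (for example `2023.3.0`). The helper names `release_tuple`, `supports_release`, and `installed_openvino_version` are made up for this illustration and are not part of OpenVINO.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional, Tuple


def release_tuple(version_string: str) -> Tuple[int, int]:
    """Return (year, minor) from a version string such as '2023.3.0'."""
    year, minor = version_string.split(".")[:2]
    return int(year), int(minor)


def supports_release(installed: str, required: str = "2023.3") -> bool:
    """True if the installed version is at least the required release."""
    return release_tuple(installed) >= release_tuple(required)


def installed_openvino_version() -> Optional[str]:
    """Look up the installed version; 'openvino' as the name is an assumption."""
    try:
        return version("openvino")
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_openvino_version()
    if found is None:
        print("openvino is not installed in this environment")
    elif supports_release(found):
        print(f"openvino {found} matches the main branch requirements")
    else:
        print(f"openvino {found} is older than 2023.3; consider upgrading")
```

Run it inside the activated `openvino_env` environment so that the lookup inspects the same packages the notebooks will use.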

### 💻 First steps

Brief tutorials that demonstrate how to use OpenVINO's Python API for inference.

| [001-hello-world](notebooks/001-hello-world/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F001-hello-world%2F001-hello-world.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/001-hello-world/001-hello-world.ipynb) | [002-openvino-api](notebooks/002-openvino-api/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F002-openvino-api%2F002-openvino-api.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/002-openvino-api/002-openvino-api.ipynb) | [003-hello-segmentation](notebooks/003-hello-segmentation/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F003-hello-segmentation%2F003-hello-segmentation.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/003-hello-segmentation/003-hello-segmentation.ipynb) | [004-hello-detection](notebooks/004-hello-detection/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F004-hello-detection%2F004-hello-detection.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/004-hello-detection/004-hello-detection.ipynb) |
| --- | --- | --- | --- |
| Classify an image with OpenVINO | Learn the OpenVINO Python API | Semantic segmentation with OpenVINO | Text detection with OpenVINO |
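The launch links in the table above follow two URL patterns, one for mybinder.org and one for colab.research.google.com. As a rough illustration, the sketch below rebuilds both links from a notebook path inside the repository. The function names `binder_url` and `colab_url` are hypothetical, and the patterns are inferred only from the links in this README, so treat it as a convenience helper rather than an official API.

```python
from urllib.parse import quote

# Repository slug as it appears in the links of this README.
REPO = "openvinotoolkit/openvino_notebooks"


def binder_url(notebook_path: str, ref: str = "HEAD", param: str = "filepath") -> str:
    """Build a Binder launch link; the path is percent-encoded ('/' becomes %2F).

    Some links in this README use 'labpath' instead of 'filepath' as the
    query parameter, so the name is configurable.
    """
    encoded = quote(notebook_path, safe="")
    return f"https://mybinder.org/v2/gh/{REPO}/{ref}?{param}={encoded}"


def colab_url(notebook_path: str, branch: str = "main") -> str:
    """Build a Colab launch link; the path is appended without encoding."""
    return f"https://colab.research.google.com/github/{REPO}/blob/{branch}/{notebook_path}"


if __name__ == "__main__":
    path = "notebooks/001-hello-world/001-hello-world.ipynb"
    print(binder_url(path))
    print(colab_url(path))
```

Not every notebook is listed with both links, so a generated URL is only useful for notebooks that this README already marks as runnable on that service.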

### ⌚ Convert & Optimize

Tutorials that explain how to optimize and quantize models with OpenVINO tools.

| Notebook | Description |
| :---: | :--- |
| [101-tensorflow-classification-to-openvino](notebooks/101-tensorflow-classification-to-openvino/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F101-tensorflow-classification-to-openvino%2F101-tensorflow-classification-to-openvino.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/101-tensorflow-classification-to-openvino/101-tensorflow-classification-to-openvino.ipynb) | Convert TensorFlow models to OpenVINO IR |
| [102-pytorch-to-openvino](notebooks/102-pytorch-to-openvino/)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/102-pytorch-to-openvino/102-pytorch-to-openvino.ipynb) | Convert PyTorch models to OpenVINO IR |
| [103-paddle-to-openvino](notebooks/103-paddle-to-openvino/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F103-paddle-to-openvino%2F103-paddle-to-openvino-classification.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/103-paddle-to-openvino/103-paddle-to-openvino-classification.ipynb) | Convert PaddlePaddle models to OpenVINO IR |
| [104-model-tools](notebooks/104-model-tools/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F104-model-tools%2F104-model-tools.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/104-model-tools/104-model-tools.ipynb) | Download, convert and benchmark models from Open Model Zoo |
| [105-language-quantize-bert](notebooks/105-language-quantize-bert/)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/105-language-quantize-bert/105-language-quantize-bert.ipynb) | Optimize and quantize a pre-trained BERT model |
| [106-auto-device](notebooks/106-auto-device/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F106-auto-device%2F106-auto-device.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/106-auto-device/106-auto-device.ipynb) | Demonstrate how to use AUTO Device |
| [107-speech-recognition-quantization](notebooks/107-speech-recognition-quantization/)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/107-speech-recognition-quantization/107-speech-recognition-quantization-data2vec.ipynb) | Quantize speech recognition models using NNCF PTQ API |
| [108-gpu-device](notebooks/108-gpu-device/) | Working with GPUs in OpenVINO™ |
| [109-performance-tricks](notebooks/109-performance-tricks/) | Performance tricks in OpenVINO™ |
| [110-ct-segmentation-quantize](notebooks/110-ct-segmentation-quantize/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F110-ct-segmentation-quantize%2F110-ct-scan-live-inference.ipynb) | Quantize a kidney segmentation model and show live inference |
| [112-pytorch-post-training-quantization-nncf](notebooks/112-pytorch-post-training-quantization-nncf/) | Use Neural Network Compression Framework (NNCF) to quantize PyTorch model in post-training mode (without model fine-tuning) |
| [113-image-classification-quantization](notebooks/113-image-classification-quantization/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F113-image-classification-quantization%2F113-image-classification-quantization.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/113-image-classification-quantization/113-image-classification-quantization.ipynb) | Quantize Image Classification model |
| [115-async-api](notebooks/115-async-api/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F115-async-api%2F115-async-api.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/115-async-api/115-async-api.ipynb) | Use Asynchronous Execution to Improve Data Pipelining |
| [116-sparsity-optimization](notebooks/116-sparsity-optimization/)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/116-sparsity-optimization/116-sparsity-optimization.ipynb) | Improve performance of sparse Transformer models |
| [117-model-server](notebooks/117-model-server/) | Introduction to model serving with OpenVINO™ Model Server (OVMS) |
| [118-optimize-preprocessing](notebooks/118-optimize-preprocessing/)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/118-optimize-preprocessing/118-optimize-preprocessing.ipynb) | Improve performance of image preprocessing step |
| [119-tflite-to-openvino](notebooks/119-tflite-to-openvino/)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/119-tflite-to-openvino/119-tflite-to-openvino.ipynb) | Convert TensorFlow Lite models to OpenVINO IR |
| [120-tensorflow-object-detection-to-openvino](notebooks/120-tensorflow-object-detection-to-openvino/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F120-tensorflow-object-detection-to-openvino%2F120-tensorflow-object-detection-to-openvino.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/120-tensorflow-object-detection-to-openvino/120-tensorflow-object-detection-to-openvino.ipynb) | Convert TensorFlow Object Detection models to OpenVINO IR |
| [121-convert-to-openvino](notebooks/121-convert-to-openvino/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F121-convert-to-openvino%2F121-convert-to-openvino.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/121-convert-to-openvino/121-convert-to-openvino.ipynb) | Learn OpenVINO model conversion API |
| [122-quantizing-model-with-accuracy-control](notebooks/122-quantizing-model-with-accuracy-control/) | Quantizing with Accuracy Control using NNCF |
| [123-detectron2-to-openvino](notebooks/123-detectron2-to-openvino/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F123-detectron2-to-openvino%2F123-detectron2-to-openvino.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/123-detectron2-to-openvino/123-detectron2-to-openvino.ipynb) | Convert Detectron2 models to OpenVINO IR |
| [124-hugging-face-hub](notebooks/124-hugging-face-hub/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F124-hugging-face-hub%2F124-hugging-face-hub.ipynb)<br>[Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/124-hugging-face-hub/124-hugging-face-hub.ipynb) | Load models from Hugging Face Model Hub with OpenVINO™ |
| [125-torchvision-zoo-to-openvino](notebooks/125-torchvision-zoo-to-openvino/)<br>Classification [Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F125-torchvision-zoo-to-openvino%2F125-convnext-classification.ipynb) [Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/125-torchvision-zoo-to-openvino/125-convnext-classification.ipynb)<br>Semantic Segmentation [Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F125-torchvision-zoo-to-openvino%2F125-lraspp-segmentation.ipynb) [Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/125-torchvision-zoo-to-openvino/125-lraspp-segmentation.ipynb) | Convert torchvision classification and semantic segmentation models to OpenVINO IR |

| [126-tensorflow-hub](notebooks/126-tensorflow-hub/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F126-tensorflow-hub%2F126-tensorflow-hub.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/126-tensorflow-hub/126-tensorflow-hub.ipynb) | Convert TensorFlow Hub models to OpenVINO IR |

| [127-big-transfer-quantization](notebooks/127-big-transfer-quantization/)| BiT Image Classification OpenVINO IR model Quantization with NNCF |

| [128-openvino-tokenizers](notebooks/128-openvino-tokenizers/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F128-openvino-tokenizers%2F128-openvino-tokenizers.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/128-openvino-tokenizers/128-openvino-tokenizers.ipynb)| Incorporate Text Processing Into OpenVINO Pipelines with OpenVINO Tokenizers |

### 🎯 Model Demos

Demos that demonstrate inference on a particular model.

| Notebook | Description | Preview |

| :-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------: | :------------------------------------------------------------------------------- | :------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------: |

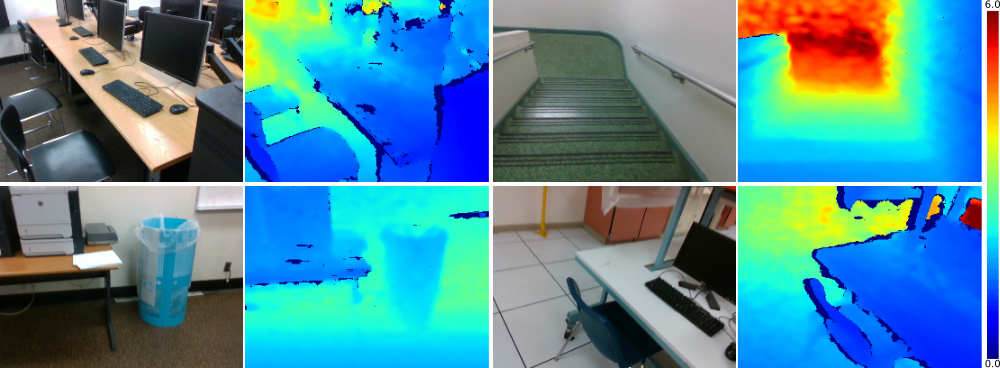

| [201-vision-monodepth](notebooks/201-vision-monodepth/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F201-vision-monodepth%2F201-vision-monodepth.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/201-vision-monodepth/201-vision-monodepth.ipynb) | Monocular depth estimation with images and video |  |

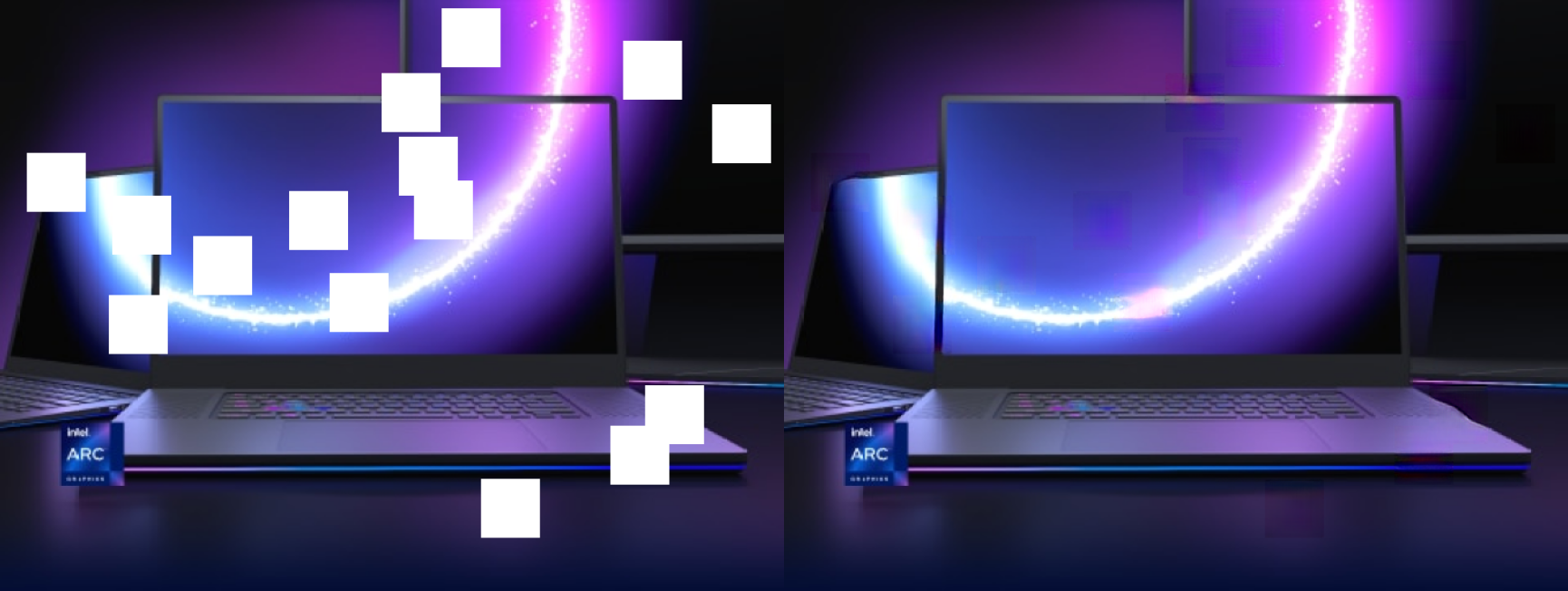

| [202-vision-superresolution-image](notebooks/202-vision-superresolution/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F202-vision-superresolution%2F202-vision-superresolution-image.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/202-vision-superresolution/202-vision-superresolution-image.ipynb) | Upscale raw images with a super resolution model |  |

| [202-vision-superresolution-video](notebooks/202-vision-superresolution/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F202-vision-superresolution%2F202-vision-superresolution-video.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/202-vision-superresolution/202-vision-superresolution-video.ipynb) | Turn 360p into 1080p video using a super resolution model |  |

| [203-meter-reader](notebooks/203-meter-reader/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F203-meter-reader%2F203-meter-reader.ipynb) | Use PaddlePaddle pre-trained models to read an industrial meter's value |  |

| [204-segmenter-semantic-segmentation](notebooks/204-segmenter-semantic-segmentation/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/204-segmenter-semantic-segmentation/204-segmenter-semantic-segmentation.ipynb) | Semantic Segmentation with OpenVINO™ using Segmenter |  |

| [205-vision-background-removal](notebooks/205-vision-background-removal/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F205-vision-background-removal%2F205-vision-background-removal.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/205-vision-background-removal/205-vision-background-removal.ipynb) | Remove and replace the background in an image using salient object detection |  |

| [206-vision-paddlegan-anime](notebooks/206-vision-paddlegan-anime/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/206-vision-paddlegan-anime/206-vision-paddlegan-anime.ipynb) | Turn an image into anime using a GAN |  |

| [207-vision-paddlegan-superresolution](notebooks/207-vision-paddlegan-superresolution/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/207-vision-paddlegan-superresolution/207-vision-paddlegan-superresolution.ipynb) | Upscale small images with superresolution using a PaddleGAN model| |

| [208-optical-character-recognition](notebooks/208-optical-character-recognition/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/208-optical-character-recognition/208-optical-character-recognition.ipynb) | Annotate text on images using a text recognition ResNet |  |

| [209-handwritten-ocr](notebooks/209-handwritten-ocr/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F209-handwritten-ocr%2F209-handwritten-ocr.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/209-handwritten-ocr/209-handwritten-ocr.ipynb) | OCR for handwritten simplified Chinese and Japanese |

的人不一了是他有为在责新中任自之我们 |

| [210-slowfast-video-recognition](notebooks/210-slowfast-video-recognition/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F210-slowfast-video-recognition%2F210-slowfast-video-recognition.ipynb) | Video Recognition using SlowFast and OpenVINO™ |  |

| [211-speech-to-text](notebooks/211-speech-to-text/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F211-speech-to-text%2F211-speech-to-text.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/211-speech-to-text/211-speech-to-text.ipynb) | Run inference on speech-to-text recognition model |  |

| [212-pyannote-speaker-diarization](notebooks/212-pyannote-speaker-diarization/)

| Run inference on speaker diarization pipeline |  |

| [213-question-answering](notebooks/213-question-answering/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F213-question-answering%2F213-question-answering.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/213-question-answering/213-question-answering.ipynb) | Answer questions based on a given context |  |

| [214-grammar-correction](notebooks/214-grammar-correction/) | Grammatical Error Correction with OpenVINO | **Input text**: `I'm working in campany for last 2 yeas.`

**Generated text**: `I'm working in a company for the last 2 years.` |

| [215-image-inpainting](notebooks/215-image-inpainting/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F215-image-inpainting%2F215-image-inpainting.ipynb)| Fill missing pixels with image in-painting |  |

| [216-attention-center](notebooks/216-attention-center/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/216-attention-center/216-attention-center.ipynb) | The attention center model with OpenVINO™ | |

| [218-vehicle-detection-and-recognition](notebooks/218-vehicle-detection-and-recognition/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F218-vehicle-detection-and-recognition%2F218-vehicle-detection-and-recognition.ipynb) | Use pre-trained models to detect and recognize vehicles and their attributes with OpenVINO |  |

| [219-knowledge-graphs-conve](notebooks/219-knowledge-graphs-conve/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F219-knowledge-graphs-conve%2F219-knowledge-graphs-conve.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/219-knowledge-graphs-conve/219-knowledge-graphs-conve.ipynb) | Optimize the knowledge graph embeddings model (ConvE) with OpenVINO ||

| [220-books-alignment-labse](notebooks/220-cross-lingual-books-alignment/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F220-cross-lingual-books-alignment%2F220-cross-lingual-books-alignment.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/220-cross-lingual-books-alignment/220-cross-lingual-books-alignment.ipynb)| Cross-lingual Books Alignment With Transformers and OpenVINO™ | |

| [221-machine-translation](notebooks/221-machine-translation)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F221-machine-translation%2F221-machine-translation.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/221-machine-translation/221-machine-translation.ipynb) | Real-time translation from English to German | |

| [222-vision-image-colorization](notebooks/222-vision-image-colorization/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F222-vision-image-colorization%2F222-vision-image-colorization.ipynb) | Use pre-trained models to colorize black & white images using OpenVINO |  |

| [223-text-prediction](notebooks/223-text-prediction/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/223-text-prediction/223-text-prediction.ipynb) | Use pretrained models to perform text prediction on an input sequence |  |

| [224-3D-segmentation-point-clouds](notebooks/224-3D-segmentation-point-clouds/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/224-3D-segmentation-point-clouds/224-3D-segmentation-point-clouds.ipynb) | Process point cloud data and run 3D Part Segmentation with OpenVINO |  |

| [225-stable-diffusion-text-to-image](notebooks/225-stable-diffusion-text-to-image)

| Text-to-image generation with Stable Diffusion method |  |

| [226-yolov7-optimization](notebooks/226-yolov7-optimization/)

| Optimize YOLOv7 using NNCF PTQ API |  |

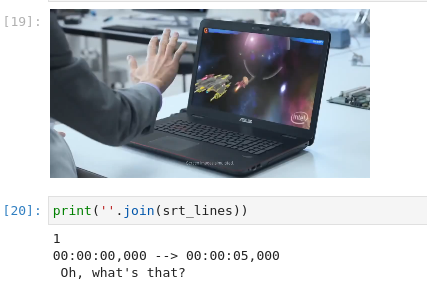

| [227-whisper-subtitles-generation](notebooks/227-whisper-subtitles-generation/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/227-whisper-subtitles-generation/227-whisper-convert.ipynb) | Generate subtitles for video with OpenAI Whisper and OpenVINO |  |

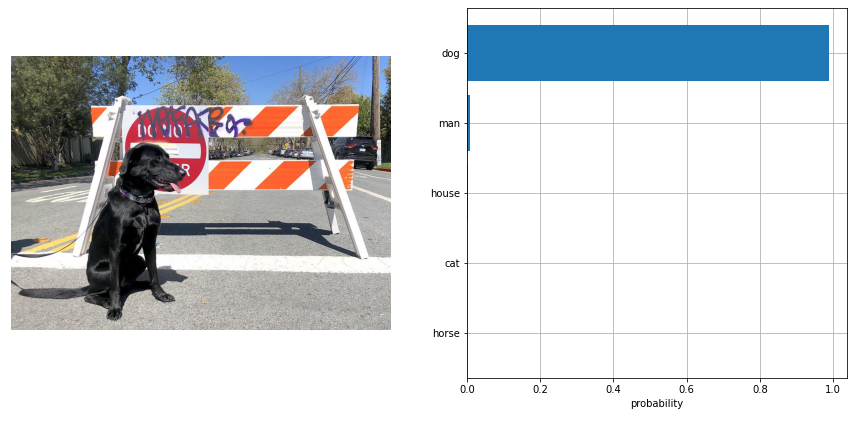

| [228-clip-zero-shot-image-classification](notebooks/228-clip-zero-shot-image-classification)

| Perform Zero-shot Image Classification with CLIP and OpenVINO |  |

| [229-distilbert-sequence-classification](notebooks/229-distilbert-sequence-classification/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F229-distilbert-sequence-classification%2F229-distilbert-sequence-classification.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/229-distilbert-sequence-classification/229-distilbert-sequence-classification.ipynb) | Sequence Classification with OpenVINO |  |

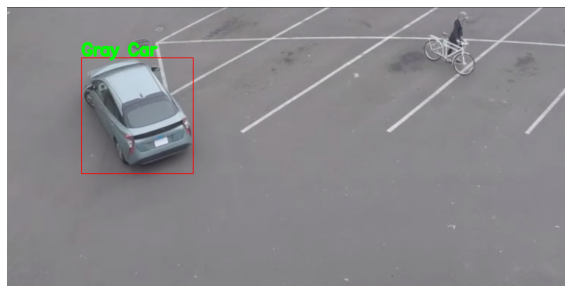

| [230-yolov8-object-detection](notebooks/230-yolov8-optimization/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/230-yolov8-optimization/230-yolov8-object-detection.ipynb) | Optimize YOLOv8 object detection using NNCF PTQ API |  |

| [230-yolov8-instance-segmentation](notebooks/230-yolov8-optimization/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/230-yolov8-optimization/230-yolov8-instance-segmentation.ipynb) | Optimize YOLOv8 instance segmentation using NNCF PTQ API |  |

| [230-yolov8-keypoint-detection](notebooks/230-yolov8-optimization/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/230-yolov8-optimization/230-yolov8-keypoint-detection.ipynb) | Optimize YOLOv8 keypoint detection using NNCF PTQ API |  |

| [231-instruct-pix2pix-image-editing](notebooks/231-instruct-pix2pix-image-editing/)

| Image editing with InstructPix2Pix |  |

| [232-clip-language-saliency-map](notebooks/232-clip-language-saliency-map/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/232-clip-language-saliency-map/232-clip-language-saliency-map.ipynb) | Language-Visual Saliency with CLIP and OpenVINO™ |  |

| [233-blip-visual-language-processing](notebooks/233-blip-visual-language-processing/)

| Visual Question Answering and Image Captioning using BLIP and OpenVINO™ |  |

| [234-encodec-audio-compression](notebooks/234-encodec-audio-compression/)

| Audio compression with EnCodec and OpenVINO™ |  |

| [235-controlnet-stable-diffusion](notebooks/235-controlnet-stable-diffusion/)

| Text-to-Image Generation with ControlNet Conditioning and OpenVINO™ |  |

| [236-stable-diffusion-v2](notebooks/236-stable-diffusion-v2/)

| Text-to-Image Generation and Infinite Zoom with Stable Diffusion v2 and OpenVINO™ |  |

| [237-segment-anything](notebooks/237-segment-anything/)

| Prompt-based segmentation using Segment Anything and OpenVINO™ |  |

| [238-deep-floyd-if](notebooks/238-deepfloyd-if/)

| Text-to-Image Generation with DeepFloyd IF and OpenVINO™ |  |

| [239-image-bind](notebooks/239-image-bind/)

| Binding multimodal data using ImageBind and OpenVINO™ |  |

| [240-dolly-2-instruction-following](notebooks/240-dolly-2-instruction-following/)

| Instruction following using Databricks Dolly 2.0 and OpenVINO™ |  |

| [241-riffusion-text-to-music](notebooks/241-riffusion-text-to-music/)

| Text-to-Music generation using Riffusion and OpenVINO™ |  |

| [242-freevc-voice-conversion](notebooks/242-freevc-voice-conversion/)

| High-Quality Text-Free One-Shot Voice Conversion with FreeVC and OpenVINO™ ||

| [243-tflite-selfie-segmentation](notebooks/243-tflite-selfie-segmentation/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F243-tflite-selfie-segmentation%2F243-tflite-selfie-segmentation.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/243-tflite-selfie-segmentation/243-tflite-selfie-segmentation.ipynb)| Selfie Segmentation using TFLite and OpenVINO™ |  |

| [244-named-entity-recognition](notebooks/244-named-entity-recognition/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/244-named-entity-recognition/244-named-entity-recognition.ipynb) | Named entity recognition with OpenVINO™ | |

| [245-typo-detector](notebooks/245-typo-detector/)

| English Typo Detection in sentences with OpenVINO™ |  |

| [246-depth-estimation-videpth](notebooks/246-depth-estimation-videpth/)

| Monocular Visual-Inertial Depth Estimation with OpenVINO™ |  |

| [247-code-language-id](notebooks/247-code-language-id/247-code-language-id.ipynb)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F247-code-language-id%2F247-code-language-id.ipynb) | Identify the programming language used in an arbitrary code snippet | ||

| [248-stable-diffusion-xl](notebooks/248-stable-diffusion-xl/)

| Image generation with Stable Diffusion XL and OpenVINO™ |  |

| [249-oneformer-segmentation](notebooks/249-oneformer-segmentation/)

| Universal segmentation with OneFormer and OpenVINO™ |  |

| [250-music-generation](notebooks/250-music-generation/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F250-music-generation%2F250-music-generation.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/250-music-generation/250-music-generation.ipynb) | Controllable Music Generation with MusicGen and OpenVINO™ |  |

| [251-tiny-sd-image-generation](notebooks/251-tiny-sd-image-generation/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/251-tiny-sd-image-generation/251-tiny-sd-image-generation.ipynb) | Image Generation with Tiny-SD and OpenVINO™ |  |

| [252-fastcomposer-image-generation](notebooks/252-fastcomposer-image-generation/)

| Image generation with FastComposer and OpenVINO™ | |

| [253-zeroscope-text2video](notebooks/253-zeroscope-text2video)

| Text-to-video synthesis with ZeroScope and OpenVINO™ | A panda eating bamboo on a rock  |

| [254-llm-chatbot](notebooks/254-llm-chatbot)

| Create LLM-powered Chatbot using OpenVINO™ |  |

| [255-mms-massively-multilingual-speech](notebooks/255-mms-massively-multilingual-speech/)

| MMS: Scaling Speech Technology to 1000+ languages with OpenVINO™ | |

| [256-bark-text-to-audio](notebooks/256-bark-text-to-audio)

| Text-to-Speech generation using Bark and OpenVINO™ |  |

| [257-llava-multimodal-chatbot](notebooks/257-llava-multimodal-chatbot)

| Visual-language assistant with LLaVA and OpenVINO™ |  |

| [258-blip-diffusion-subject-generation](notebooks/258-blip-diffusion-subject-generation)

| Subject-driven image generation and editing using BLIP Diffusion and OpenVINO™ |  |

| [259-decidiffusion-image-generation](notebooks/259-decidiffusion-image-generation)

| Image generation with DeciDiffusion and OpenVINO™ |  |

| [260-pix2struct-docvqa](notebooks/260-pix2struct-docvqa)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/260-pix2struct-docvqa/260-pix2struct-docvqa.ipynb)

| Document Visual Question Answering using Pix2Struct and OpenVINO™ |  |

| [261-fast-segment-anything](notebooks/261-fast-segment-anything/)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F261-fast-segment-anything%2F261-fast-segment-anything.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/261-fast-segment-anything/261-fast-segment-anything.ipynb) | Object segmentation with FastSAM and OpenVINO™ |  |

| [262-softvc-voice-conversion](notebooks/262-softvc-voice-conversion)

| SoftVC VITS Singing Voice Conversion and OpenVINO™ | |

| [263-latent-consistency-models-image-generation](notebooks/263-latent-consistency-models-image-generation)

| Image generation with Latent Consistency Models (LCM) and OpenVINO™ |  |

| [264-qrcode-monster](notebooks/264-qrcode-monster/)

| Generate creative QR codes with ControlNet QR Code Monster and OpenVINO™ |  |

| [265-wuerstchen-image-generation](notebooks/265-wuerstchen-image-generation)

| Text-to-image generation with Würstchen and OpenVINO™ |  |

| [266-speculative-sampling](notebooks/266-speculative-sampling)

| Text Generation via Speculative Sampling, KV Caching, and OpenVINO™ |  |

| [267-distil-whisper-asr](notebooks/267-distil-whisper-asr)

| Automatic speech recognition using Distil-Whisper and OpenVINO™ | |

| [268-table-question-answering](notebooks/268-table-question-answering)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/268-table-question-answering/268-table-question-answering.ipynb)

| Table Question Answering using TAPAS and OpenVINO™ ||

| [269-film-slowmo](notebooks/269-film-slowmo)

| Frame interpolation with FILM and OpenVINO™ |  |

| [270-sound-generation-audioldm2](notebooks/270-sound-generation-audioldm2/)

| Sound Generation with AudioLDM2 and OpenVINO™ |  |

| [271-sdxl-turbo](notebooks/271-sdxl-turbo/)

| Single-step image generation using SDXL-turbo and OpenVINO |  |

| [272-paint-by-example](notebooks/272-paint-by-example/)

| Exemplar-based image editing using diffusion models, [Paint-by-Example](https://github.com/Fantasy-Studio/Paint-by-Example), and OpenVINO™ |  |

| [273-stable-zephyr-3b-chatbot](notebooks/273-stable-zephyr-3b-chatbot)

| Use Stable-Zephyr as a chatbot assistant with OpenVINO |  |

| [274-efficient-sam](notebooks/274-efficient-sam/)

| Object segmentation with EfficientSAM and OpenVINO™ |  |

| [275-llm-question-answering](notebooks/275-llm-question-answering)

| LLM Instruction following pipeline |  |

| [276-stable-diffusion-torchdynamo-backend](notebooks/276-stable-diffusion-torchdynamo-backend/)

| Image generation with Stable Diffusion and OpenVINO™ `torch.compile` feature |  |

| [277-amused-lightweight-text-to-image](notebooks/277-amused-lightweight-text-to-image)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/277-amused-lightweight-text-to-image/277-amused-lightweight-text-to-image.ipynb)

| Lightweight image generation with aMUSEd and OpenVINO™ |  |

| [278-stable-diffusion-ip-adapter](notebooks/278-stable-diffusion-ip-adapter)

| Image conditioning in Stable Diffusion pipeline using IP-Adapter |  |

| [279-mobilevlm-language-assistant](notebooks/279-mobilevlm-language-assistant)

| Mobile language assistant with MobileVLM and OpenVINO | |

| [280-depth-anything](notebooks/280-depth-anything)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F280-depth-anything%2F280-depth-anything.ipynb)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/280-depth-anything/280-depth-anything.ipynb) | Monocular Depth Estimation with DepthAnything and OpenVINO |  |

| [281-kosmos2-multimodal-large-language-model](notebooks/281-kosmos2-multimodal-large-language-model)

| Kosmos-2: Multimodal Large Language Model and OpenVINO™ |  |

| [282-siglip-zero-shot-image-classification](notebooks/282-siglip-zero-shot-image-classification)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/282-siglip-zero-shot-image-classification/282-siglip-zero-shot-image-classification.ipynb) | Zero-shot Image Classification with SigLIP |  |

| [283-photo-maker](notebooks/283-photo-maker)

| Text-to-image generation using PhotoMaker and OpenVINO |  |

| [284-openvoice](notebooks/284-openvoice)

[](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F284-openvoice%2F284-openvoice.ipynb)[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/284-openvoice/284-openvoice.ipynb) | OpenVoice: versatile instant voice tone transfer and speech generation in various languages | |

| [285-surya-line-level-text-detection](notebooks/285-surya-line-level-text-detection)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/285-surya-line-level-text-detection/285-surya-line-level-text-detection.ipynb) | Line-level text detection with Surya |  |

| [286-instant-id](notebooks/286-instant-id)

| InstantID: Zero-shot Identity-Preserving Image Generation using OpenVINO|  |

| [287-yolov9-optimization](notebooks/287-yolov9-optimization)

| Optimize YOLOv9 using NNCF |  |

### 🏃 Model Training

Tutorials that include code to train neural networks.

| Notebook | Description | Preview |

| :-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------: | :------------------------------------------------------------------------------- | :------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------: |

| [301-tensorflow-training-openvino](notebooks/301-tensorflow-training-openvino/) | Train a flower classification model from TensorFlow, then convert to OpenVINO IR |  |

| [301-tensorflow-training-openvino-nncf](notebooks/301-tensorflow-training-openvino/) | Use Neural Network Compression Framework (NNCF) to quantize model from TensorFlow | |

| [302-pytorch-quantization-aware-training](notebooks/302-pytorch-quantization-aware-training/) | Use Neural Network Compression Framework (NNCF) to quantize PyTorch model | |

| [305-tensorflow-quantization-aware-training](notebooks/305-tensorflow-quantization-aware-training/)

|

| [301-tensorflow-training-openvino-nncf](notebooks/301-tensorflow-training-openvino/) | Use Neural Network Compression Framework (NNCF) to quantize model from TensorFlow | |

| [302-pytorch-quantization-aware-training](notebooks/302-pytorch-quantization-aware-training/) | Use Neural Network Compression Framework (NNCF) to quantize PyTorch model | |

| [305-tensorflow-quantization-aware-training](notebooks/305-tensorflow-quantization-aware-training/)

[](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/305-tensorflow-quantization-aware-training/305-tensorflow-quantization-aware-training.ipynb) | Use Neural Network Compression Framework (NNCF) to quantize TensorFlow model | |

### 📺 Live Demos

Live inference demos that run on a webcam or video files.

| Notebook | Description | Preview |
| :---: | :--- | :---: |
| [401-object-detection-webcam](notebooks/401-object-detection-webcam/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F401-object-detection-webcam%2F401-object-detection.ipynb) [Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/401-object-detection-webcam/401-object-detection.ipynb) | Object detection with a webcam or video file | |
| [402-pose-estimation-webcam](notebooks/402-pose-estimation-webcam/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F402-pose-estimation-webcam%2F402-pose-estimation.ipynb) | Human pose estimation with a webcam or video file | |
| [403-action-recognition-webcam](notebooks/403-action-recognition-webcam/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F403-action-recognition-webcam%2F403-action-recognition-webcam.ipynb) | Human action recognition with a webcam or video file | |
| [404-style-transfer-webcam](notebooks/404-style-transfer-webcam/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F404-style-transfer-webcam%2F404-style-transfer.ipynb) [Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/404-style-transfer-webcam/404-style-transfer.ipynb) | Style transfer with a webcam or video file | |
| [405-paddle-ocr-webcam](notebooks/405-paddle-ocr-webcam/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F405-paddle-ocr-webcam%2F405-paddle-ocr-webcam.ipynb) [Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/405-paddle-ocr-webcam/405-paddle-ocr-webcam.ipynb) | OCR with a webcam or video file | |
| [406-3D-pose-estimation-webcam](notebooks/406-3D-pose-estimation-webcam/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?labpath=notebooks%2F406-3D-pose-estimation-webcam%2F406-3D-pose-estimation.ipynb) | 3D display of human pose estimation with a webcam or video file | |
| [407-person-tracking-webcam](notebooks/407-person-tracking-webcam/)<br>[Binder](https://mybinder.org/v2/gh/openvinotoolkit/openvino_notebooks/HEAD?filepath=notebooks%2F407-person-tracking-webcam%2F407-person-tracking.ipynb) [Colab](https://colab.research.google.com/github/openvinotoolkit/openvino_notebooks/blob/main/notebooks/407-person-tracking-webcam/407-person-tracking.ipynb) | Person tracking with a webcam or video file | |

If you run into issues, please check the [troubleshooting section](#-troubleshooting), [FAQs](#-faq) or start a GitHub [discussion](https://github.com/openvinotoolkit/openvino_notebooks/discussions).

Notebooks with Binder and Colab buttons can be run without installing anything. [Binder](https://mybinder.org/) and [Google Colab](https://colab.research.google.com/) are free online services with limited resources. For the best performance, please follow the [Installation Guide](#-installation-guide) and run the notebooks locally.

## ⚙️ System Requirements

The notebooks run almost anywhere — your laptop, a cloud VM, or even a Docker container. The table below lists the supported operating systems and Python versions.

| Supported Operating System | [Python Version (64-bit)](https://www.python.org/) |
| :--- | :--- |
| Ubuntu 20.04 LTS, 64-bit | 3.8 - 3.10 |
| Ubuntu 22.04 LTS, 64-bit | 3.8 - 3.10 |
| Red Hat Enterprise Linux 8, 64-bit | 3.8 - 3.10 |
| CentOS 7, 64-bit | 3.8 - 3.10 |
| macOS 10.15.x versions or higher | 3.8 - 3.10 |
| Windows 10, 64-bit Pro, Enterprise or Education editions | 3.8 - 3.10 |
| Windows Server 2016 or higher | 3.8 - 3.10 |
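Before installing, it can help to confirm that the active interpreter matches the table above. The following is a minimal sketch, not part of the repository: the function names `is_supported_python` and `is_64bit` are hypothetical, and the version bounds are simply copied from the table.

```python
import sys

# Bounds copied from the table above: Python 3.8 through 3.10 (inclusive).
MIN_SUPPORTED = (3, 8)
MAX_SUPPORTED = (3, 10)


def is_supported_python(version=None):
    """Return True if (major, minor) lies within the supported range."""
    if version is None:
        version = sys.version_info[:2]
    return MIN_SUPPORTED <= tuple(version[:2]) <= MAX_SUPPORTED


def is_64bit():
    """Return True when the running interpreter is a 64-bit build."""
    return sys.maxsize > 2**32


current = ".".join(str(part) for part in sys.version_info[:3])
print(f"Python {current}: supported={is_supported_python()}, 64-bit={is_64bit()}")
```

If `supported` is reported as `False`, creating the virtual environment with a Python version from the table is likely the simplest fix.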


## 💻 Run the Notebooks

### To Launch a Single Notebook

If you wish to launch only one notebook, like the Monodepth notebook, run the command below from the `openvino_notebooks` directory.

```bash
jupyter lab notebooks/201-vision-monodepth/201-vision-monodepth.ipynb
```

### To Launch all Notebooks

```bash

jupyter lab notebooks

```

In your browser, select a notebook from the file browser in Jupyter Lab using the left sidebar. Each tutorial is located in a subdirectory within the `notebooks` directory.
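To see which tutorials are available without opening the file browser, the directory layout described above can be listed from Python. This is a small sketch under the assumption that it runs from the repository root and that each tutorial keeps its `.ipynb` files directly inside its own subdirectory of `notebooks`; the helper name `find_notebooks` is hypothetical.

```python
from pathlib import Path


def find_notebooks(root="notebooks"):
    """Map each tutorial subdirectory name to the .ipynb files inside it."""
    tutorials = {}
    for path in sorted(Path(root).glob("*/*.ipynb")):
        # Skip hidden folders such as Jupyter checkpoint directories.
        if path.parent.name.startswith("."):
            continue
        tutorials.setdefault(path.parent.name, []).append(path.name)
    return tutorials


for tutorial, files in find_notebooks().items():
    print(f"{tutorial}: {', '.join(files)}")
```

Any path printed this way can be passed to `jupyter lab` to open that notebook directly.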

## 🧹 Cleaning Up

1. Shut Down Jupyter Kernel

To end your Jupyter session, press `Ctrl-c`. At the prompt `Shutdown this Jupyter server (y/[n])?`, enter `y` and press `Enter`.

2. Deactivate Virtual Environment

To deactivate your virtualenv, run `deactivate` from the terminal window where you activated `openvino_env`.

To reactivate your environment, run `source openvino_env/bin/activate` on Linux or `openvino_env\Scripts\activate` on Windows, then type `jupyter lab` or `jupyter notebook` to launch the notebooks again.

3. Delete Virtual Environment _(Optional)_

To remove your virtual environment, simply delete the `openvino_env` directory:

- On Linux and macOS:

```bash

rm -rf openvino_env

```

- On Windows:

```bash

rmdir /s openvino_env

```

- Remove `openvino_env` Kernel from Jupyter

```bash

jupyter kernelspec remove openvino_env

```


## ⚠️ Troubleshooting

If these tips do not solve your problem, please open a [discussion topic](https://github.com/openvinotoolkit/openvino_notebooks/discussions) or create an [issue](https://github.com/openvinotoolkit/openvino_notebooks/issues)!

- To check some common installation problems, run `python check_install.py`. This script is located in the openvino_notebooks directory. Please run it after activating the `openvino_env` virtual environment.

- If you get an `ImportError`, double-check that you installed the Jupyter kernel. If necessary, choose the `openvino_env` kernel from the _Kernel->Change Kernel_ menu in Jupyter Lab or Jupyter Notebook.

- If OpenVINO is installed globally, do not run installation commands in a terminal where `setupvars.bat` or `setupvars.sh` are sourced.

- For Windows installation, it is recommended to use _Command Prompt (`cmd.exe`)_, not _PowerShell_.
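When an `ImportError` appears, a quick way to tell whether the notebook is using the wrong kernel is to inspect the interpreter from inside a cell. The sketch below is an illustration only and is not the repository's `check_install.py`; the helper name `describe_environment` is hypothetical, and it relies solely on the Python standard library.

```python
import importlib.util
import sys


def describe_environment(module_name="openvino"):
    """Report which interpreter is running and whether a module can be found."""
    return {
        # Path of the Python executable behind the current kernel or shell.
        "interpreter": sys.executable,
        # True when running inside a virtual environment such as openvino_env.
        "in_virtual_env": sys.prefix != sys.base_prefix,
        # True when the module is importable from this interpreter.
        "module_found": importlib.util.find_spec(module_name) is not None,
    }


for key, value in describe_environment().items():
    print(f"{key}: {value}")
```

If `interpreter` does not point inside `openvino_env`, or `module_found` is `False`, switching to the `openvino_env` kernel as described above is the likely remedy.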


## 🧑💻 Contributors

Made with [`contrib.rocks`](https://contrib.rocks).


## ❓ FAQ

* [Which devices does OpenVINO support?](https://docs.openvino.ai/2023.3/openvino_docs_OV_UG_supported_plugins_Supported_Devices.html#doxid-openvino-docs-o-v-u-g-supported-plugins-supported-devices)

* [What is the first CPU generation you support with OpenVINO?](https://www.intel.com/content/www/us/en/developer/tools/openvino-toolkit/system-requirements.html)

* [Are there any success stories about deploying real-world solutions with OpenVINO?](https://www.intel.com/content/www/us/en/internet-of-things/ai-in-production/success-stories.html)

---

\* Other names and brands may be claimed as the property of others.
